Extending LogicMonitor with LogicMonitor: An IT Glue Integration

We talk a lot about LogicMonitor’s flexibility and extensibility. One reason is that our architecture makes it easy to write integrations to other tools’ APIs, while our API lets other tools talk to our platform. This also means you can make LogicMonitor talk to itself.

I was talking recently to a managed service provider with a problem. Their customer environments contain a range of resources and resource types, not all of which they are responsible for, and therefore not all of which they want to monitor. While there are various ways to approach this, I also learned that their entire inventory exists within a well-known documentation platform, IT Glue.

IT Glue has a well-documented API. LogicMonitor has a well-documented API. All I needed to do was to pull the data from the one system, check it matched what the customer wanted, and push it into the other. So far, so good… so what? There’s nothing ground-breaking in exchanging data between platforms using their respective APIs; it’s what our ServiceNow CMDB integration does and it’s what many other integration tools do.

However, many integrators exist outside LogicMonitor, often as their own tool or platform. That can mean another system to maintain or subscribe to, another place to configure a schedule or trigger a synchronization, another place to receive alerts from, another place to report on, and another place to review progress and actions taken.

If only there were some way to build this integration directly into LogicMonitor, such that it was wholly within the control of the platform, could be run on a schedule or on-demand, could alert on changes and failures within your existing monitoring workflows, and could produce a sensible, human-readable output of its activity over time…

Happily, there is. One of LogicMonitor’s superpowers is our extensibility, meaning we can add new monitoring integrations very rapidly, to accommodate new technologies as they appear. Amongst the options for integrations are ConfigSources, which as part of LM Config enable you to read, store and alert on textual data such as the startup or running configs from network devices. LM Config was created to enable the monitoring and backup of network device configurations, to massively reduce time to resolution for network issues caused by ill-advised (or worse) configuration changes.

However, ignoring that designed intention, ConfigSources more simply provide a way to run a script periodically (from hourly to daily) or on-demand, and to store whatever that script returns for alerting or review – all within the LogicMonitor platform.

This provides the ideal place to store and run my script without demanding any additional systems; furthermore, the script could contain an amount of intelligence and “self-awareness” (if not quite on a Skynet level), thanks to the ability of a ConfigSource to use environmental information from LogicMonitor.

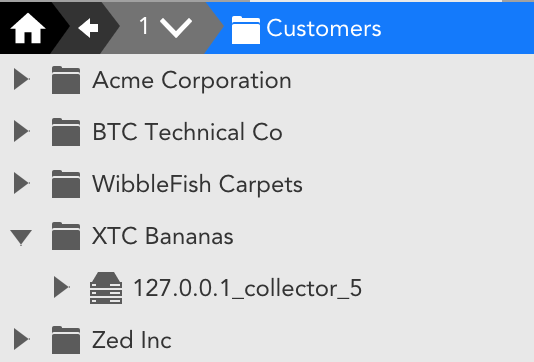

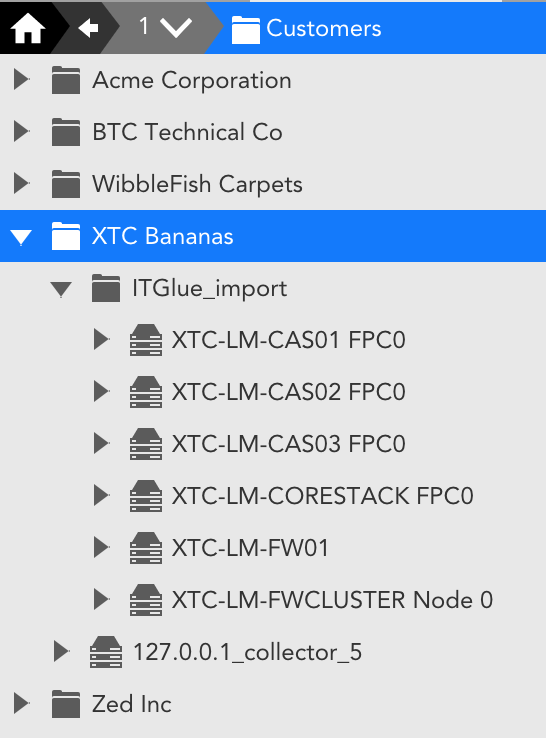

Being an MSP, as so many of our customers are, my customer was implementing a Resource Group structure of a high-level “Customers” group, and then subgroups within that for each of their clients. As each client environment is separate, they will also deploy at least one collector per client. This consistent approach means that my ConfigSource can be applied to all collectors anywhere within the “Customers” group and, once given API credentials for IT Glue and LogicMonitor, the script will do the rest.

First up, the script determines the client name from the name of the “Customers” subgroup that its collector sits within, at any depth; using the LM API, it also creates a dedicated import folder if one doesn’t already exist. Then, using the IT Glue API, it finds the organization that matches that client name, and from there all the resources linked to that organization. The script also retrieves all resources currently monitored for the client in question.
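To make that first step concrete, here is a minimal Python sketch of the client lookup. It is not the script from the integration itself: the function names are hypothetical, and it assumes the collector's group paths arrive as slash-separated strings such as `Customers/Acme Corp/Network` and that the IT Glue organizations have already been fetched into a list of dictionaries with a `name` key.

```python
from typing import Iterable, Optional


def client_from_group_paths(paths: Iterable[str], root: str = "Customers") -> Optional[str]:
    """Return the name of the subgroup directly under `root`, or None if absent.

    Assumption: each path is a slash-separated string, top-level group first.
    """
    for path in paths:
        parts = [p for p in path.split("/") if p]
        # A path like "Customers/Acme Corp/Network" yields "Acme Corp",
        # however deep below the client subgroup the collector sits.
        if len(parts) >= 2 and parts[0] == root:
            return parts[1]
    return None


def find_organization(orgs: Iterable[dict], client: str) -> Optional[dict]:
    """Match an organization by name, ignoring case and surrounding whitespace."""
    wanted = client.strip().casefold()
    for org in orgs:
        if org.get("name", "").strip().casefold() == wanted:
            return org
    return None
```

Matching on a normalised name keeps the lookup tolerant of small differences in capitalisation between the two systems; a stricter approach would store the IT Glue organization ID as a property on the client group instead.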

With that combined knowledge, each result from IT Glue can be imported if new, or checked and updated if already in LogicMonitor. Further user-configurable filters can easily be created by setting resource properties in LogicMonitor, for example to bring in only specific resource types and/or to exclude specific types. As properties can be set globally or per group, the same integration script can be used across all clients, whilst still allowing different resource types to be imported (or not) for different clients, for example clients on different service tiers, or clients whose contract with the MSP does or does not cover certain resource types.
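The import-or-update decision and the type filters can be sketched as a small planning function. This is an illustration under assumptions rather than the actual script: the record shape (`name`, `type`, `properties`), the function names, and the idea of passing the include and exclude lists as Python sets are all hypothetical stand-ins for values that would come from the two APIs and from LogicMonitor resource properties.

```python
from typing import Dict, Iterable, List, Optional, Set


def type_allowed(ci_type: str, include: Optional[Set[str]], exclude: Set[str]) -> bool:
    """Apply the optional include list first, then the exclude list."""
    if include is not None and ci_type not in include:
        return False
    return ci_type not in exclude


def plan_sync(source: Iterable[dict], monitored: Dict[str, dict],
              include: Optional[Set[str]] = None,
              exclude: Optional[Set[str]] = None) -> Dict[str, List[str]]:
    """Sort source records into add / update / unchanged / skipped buckets.

    `monitored` maps a resource name to its current record (assumed shape).
    """
    plan: Dict[str, List[str]] = {"add": [], "update": [], "unchanged": [], "skipped": []}
    exclude = exclude or set()
    for item in source:
        name = item["name"]
        if not type_allowed(item["type"], include, exclude):
            plan["skipped"].append(name)
        elif name not in monitored:
            plan["add"].append(name)
        else:
            current = monitored[name].get("properties", {})
            wanted = item.get("properties", {})
            # Only properties supplied by the source are compared, so
            # properties set elsewhere never trigger an update.
            changed = any(current.get(k) != v for k, v in wanted.items())
            plan["update" if changed else "unchanged"].append(name)
    return plan
```

Separating the plan from the API calls that carry it out makes the logic easy to test, and the same plan can feed a human-readable summary of each run.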

The resources are tagged with all of the CI attributes found in IT Glue, which may, for example, include a warranty expiry date, location data, support contact information, or whatever else has been added to the record in IT Glue. As these are then just like any other LogicMonitor resource properties, they can enrich alert and report data to enable recipients to easily see whether the resource is still in warranty, where it is, who’s responsible for it, or any other data the customer chooses. They can also be used to automatically group resources by type, location, support level, etc. and as they are periodically updated from the IT Glue record, any changes in IT Glue will be reflected in LogicMonitor.
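As a rough sketch of that tagging step, the function below turns a dictionary of CI attributes into property names and string values. The `itglue.` prefix, the function name, and the sample attribute keys in the usage note are assumptions made for illustration, not the naming the integration actually uses.

```python
import re
from typing import Any, Dict


def to_properties(attributes: Dict[str, Any], prefix: str = "itglue.") -> Dict[str, str]:
    """Convert CI attributes into prefixed, string-valued resource properties."""
    props: Dict[str, str] = {}
    for key, value in attributes.items():
        # Skip attributes that carry no information.
        if value is None or value == "":
            continue
        # Replace runs of anything other than letters, digits, "_" or "."
        # with a single underscore, then lower-case the result.
        safe_key = re.sub(r"[^A-Za-z0-9_.]+", "_", key.strip()).strip("_").lower()
        if safe_key:
            props[prefix + safe_key] = str(value)
    return props
```

For example, `to_properties({"warranty-expires-at": "2027-01-31", "notes": None})` returns `{"itglue.warranty_expires_at": "2027-01-31"}`. A common prefix keeps imported values clearly separated from properties set by hand or discovered by LogicMonitor.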

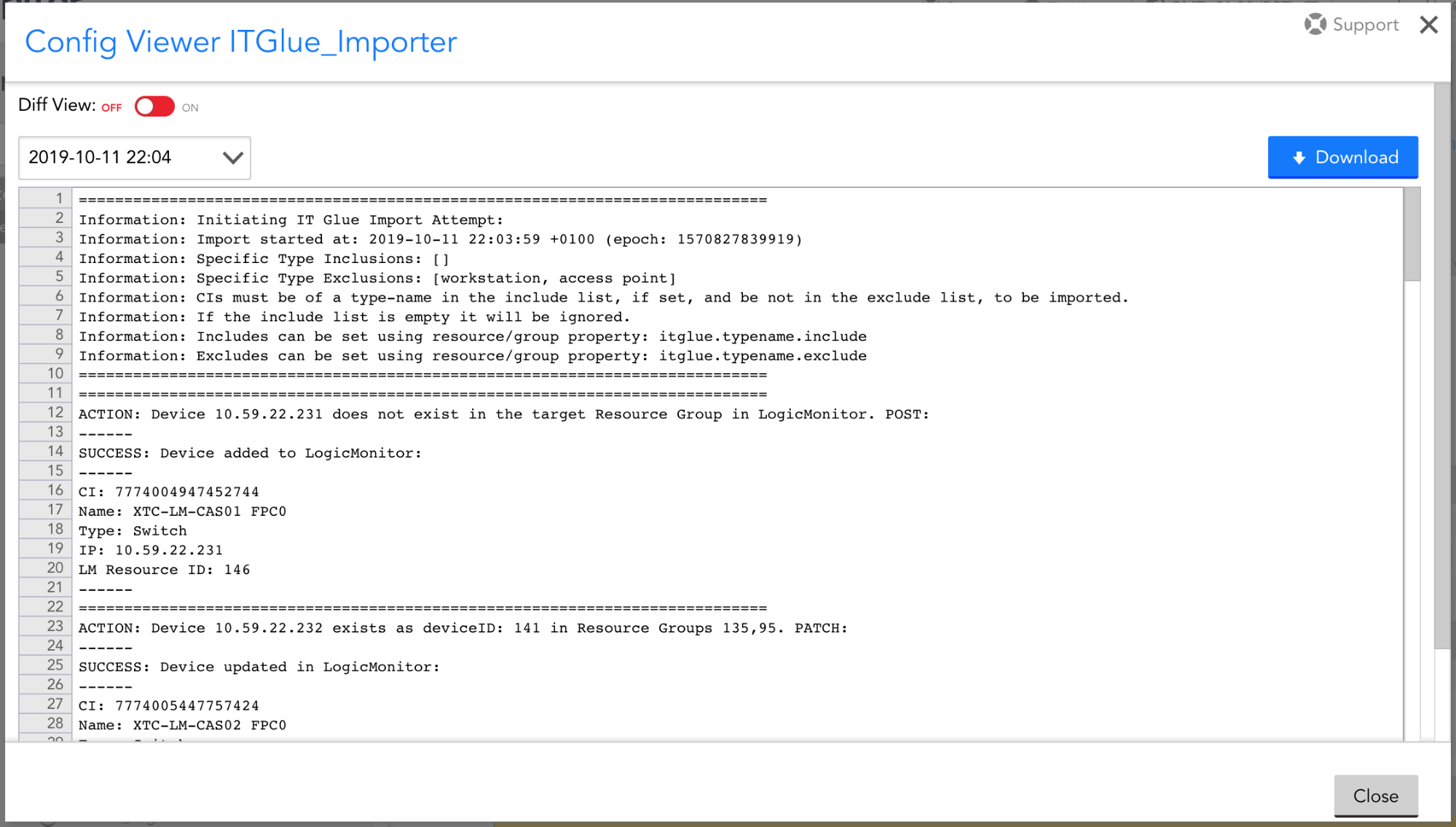

Finally, with the script being held within a ConfigSource, it writes a summary of actions for each run that is stored as if it were a config file. Each run whose output differs from the previous one (for example, because new devices were added) can therefore be alerted on, and the history of imports and synchronisations can be viewed in-platform in human-readable form. As well as being visible in LogicMonitor, those stored “config” records can be accessed by… you’ve guessed it… a call to our API. Maybe that’s one for another post. Interested in learning more? Access a free trial or reach out to us to learn more about LogicMonitor’s integrations.

© LogicMonitor 2026 | All rights reserved. | All trademarks, trade names, service marks, and logos referenced herein belong to their respective companies.