Is Linux disk utilization on your monitoring dashboard?

Recently we rolled out a new release of LogicMonitor. Alongside the many improvements and fixes that users saw, the release included some backend changes to the Linux systems that store monitoring data.

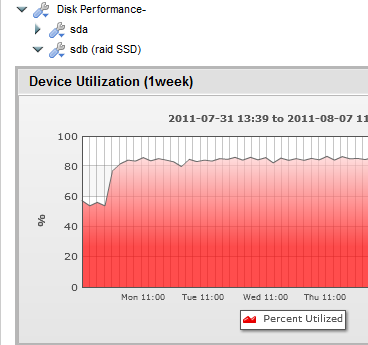

The rollout went smoothly and no alerts were triggered, but it was easy to see that something had changed:

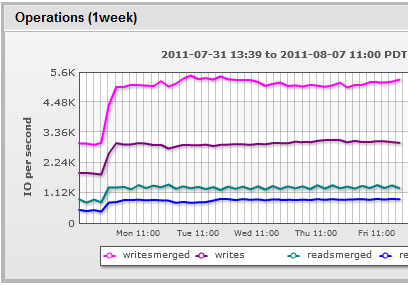

The percentage of time the SSDs were busy jumped dramatically. The graph below shows why: physical write operations to the disks (Writes) and physical reads both nearly doubled. Even for an array of solid-state disks, that is a lot of operations per second.

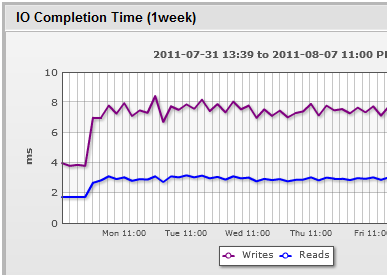
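On Linux, the counters behind graphs like these live in `/proc/diskstats`: cumulative per-device counts of completed reads and writes, plus the milliseconds each device spent doing I/O. Busy percentage is commonly computed as the change in that busy time divided by the sampling interval, which is how tools such as `iostat -x` derive `%util`. The sketch below assumes two samples taken a known interval apart; the class and function names and the sample counter values are hypothetical, and this is not a description of how LogicMonitor itself collects the data.

```python
from dataclasses import dataclass


@dataclass
class DiskSample:
    """Cumulative counters for one device (they grow from boot; wraparound ignored)."""
    reads: int      # reads completed successfully
    read_ms: int    # milliseconds spent reading
    writes: int     # writes completed successfully
    write_ms: int   # milliseconds spent writing
    io_ms: int      # milliseconds spent doing I/O, the basis of busy %


def parse_diskstats(text: str) -> dict[str, DiskSample]:
    """Parse /proc/diskstats content: major, minor, device name, then counters."""
    samples = {}
    for line in text.splitlines():
        f = line.split()
        if len(f) < 14:  # skip blank or truncated lines
            continue
        samples[f[2]] = DiskSample(
            reads=int(f[3]), read_ms=int(f[6]),
            writes=int(f[7]), write_ms=int(f[10]),
            io_ms=int(f[12]),
        )
    return samples


def disk_rates(before: DiskSample, after: DiskSample, interval_s: float) -> dict[str, float]:
    """Reads/s, writes/s and busy % between two samples taken interval_s apart."""
    return {
        "reads_per_s": (after.reads - before.reads) / interval_s,
        "writes_per_s": (after.writes - before.writes) / interval_s,
        # Share of wall-clock time with at least one I/O in flight.
        "busy_pct": 100.0 * (after.io_ms - before.io_ms) / (interval_s * 1000.0),
    }


# Hypothetical lines for device "sda", sampled one second apart.
t0 = "8 0 sda 1000 0 8000 500 2000 0 16000 1500 0 1800 2000"
t1 = "8 0 sda 1600 0 12800 800 3200 0 25600 2400 0 2400 3200"
rates = disk_rates(parse_diskstats(t0)["sda"], parse_diskstats(t1)["sda"], 1.0)
# rates == {'reads_per_s': 600.0, 'writes_per_s': 1200.0, 'busy_pct': 60.0}
```

On a live host the text would come from reading `/proc/diskstats` twice with a pause in between. The `iostat` man page cautions that busy percentage does not reflect the real limits of devices that serve requests in parallel, such as RAID arrays and modern SSDs, so for an SSD array it is best read alongside operation counts and latency.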

No alerts triggered because drive utilization stayed just below the warning threshold, and IO completion time, while higher, was still plenty fast. That is especially true for reads, which is what we alert on. Our application performs writes asynchronously in the background, so write latency does not affect user experience; read latency can.
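IO completion time is usually derived from the same kind of cumulative counters: the milliseconds spent on reads (or writes) during an interval divided by the number of reads (or writes) completed, which is what `iostat -x` reports as `r_await` and `w_await`. A minimal sketch of the policy described above, alerting on read latency but only graphing write latency, might look like this. The 5 ms threshold and the function names are hypothetical placeholders, not LogicMonitor's actual alert settings.

```python
def avg_completion_ms(ops_before: int, ms_before: int,
                      ops_after: int, ms_after: int) -> float:
    """Average milliseconds per completed operation over an interval."""
    ops = ops_after - ops_before
    if ops <= 0:
        return 0.0  # nothing completed in the interval
    return (ms_after - ms_before) / ops


# Hypothetical warning threshold for average read latency.
READ_WARN_MS = 5.0


def read_latency_alert(read_await_ms: float, warn_ms: float = READ_WARN_MS) -> bool:
    """Alert on reads only: writes are assumed asynchronous and not user-facing."""
    return read_await_ms > warn_ms


# Example interval: 600 reads took 300 ms in total, 1200 writes took 900 ms.
r_await = avg_completion_ms(1000, 500, 1600, 800)    # 0.5 ms per read
w_await = avg_completion_ms(2000, 1500, 3200, 2400)  # 0.75 ms per write
alert = read_latency_alert(r_await)                  # False
```

Keeping write latency out of the alert rule reflects the reasoning in the text; it would still be worth graphing, since a growing write backlog can eventually slow reads on the same devices.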

So while there is no immediate issue, the change (a more aggressive cache management algorithm) clearly affects how far we can scale on a given set of hardware, and it is something we are going to revisit.

The point I want to make, though, is that you need to eyeball graphs regularly, especially after releases. The fact that no alerts triggered does not mean all is well; it may just mean you don't have alerts on the right things. Create dashboards that plot your key metrics (whether OS, application, or database metrics), scan them regularly, and you'll be able to spot anomalies easily and then decide how to address them. (We create several dashboards, each focusing on a different class of systems: high level, databases, storage, etc.)
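Eyeballing a dashboard after a release is essentially comparing each metric with its own recent history. As a rough complement to that review (not a substitute for it), a script could compare a metric's mean before and after a release and list metrics whose level moved a lot, even when no alert threshold was crossed. The 50% cutoff, the metric names, and the sample values below are illustrative assumptions.

```python
from statistics import mean


def release_shift(before: list[float], after: list[float]) -> float:
    """Relative change in a metric's mean across a release (0.5 means +50%)."""
    baseline = mean(before)
    if baseline == 0:
        raise ValueError("baseline mean is zero; relative change is undefined")
    return (mean(after) - baseline) / baseline


def flag_shifts(metrics: dict[str, tuple[list[float], list[float]]],
                min_change: float = 0.5) -> list[str]:
    """Names of metrics whose mean moved by at least min_change in either direction."""
    return [name for name, (before, after) in metrics.items()
            if abs(release_shift(before, after)) >= min_change]


# Hypothetical samples from windows before and after a release.
metrics = {
    "disk_busy_pct": ([30.0, 32.0, 31.0, 29.0], [58.0, 60.0, 61.0, 59.0]),
    "read_await_ms": ([0.4, 0.5, 0.5, 0.4], [0.5, 0.6, 0.5, 0.6]),
}
shifted = flag_shifts(metrics)  # ['disk_busy_pct']
```

A relative cutoff like this is easy to reason about but ignores each metric's normal variability, which is one reason a human glance at the graph remains useful.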

As a side note: why doesn’t LogicMonitor have automated anomaly detection that can “eyeball” such metrics for you and look for “unusual” changes? Because it’s hard. 🙂 Actually, building an anomaly detector is not that hard; what is hard is building one that separates meaningful changes from noise without sending too many anomaly notifications. This is an area we’re still working on.
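The difficulty described above shows up even in the simplest detector. The sketch below uses a rolling z-score, a common textbook technique and not LogicMonitor's method: each point is compared with the mean and standard deviation of the preceding `window` points. With default settings a one-off spike is reported just like a sustained shift; the hypothetical `persist` parameter requires several consecutive outliers, suppressing one-off noise at the cost of slower detection. The window size, threshold, and sample series are assumptions for illustration.

```python
from statistics import mean, pstdev


def anomalies(series: list[float], window: int = 5, z: float = 3.0,
              persist: int = 1) -> list[int]:
    """Start indices of runs of `persist` consecutive points that all lie more
    than `z` standard deviations from the mean of the `window` points before the run."""
    flagged = []
    for i in range(window, len(series) - persist + 1):
        baseline = series[i - window:i]
        mu, sigma = mean(baseline), pstdev(baseline)
        if sigma == 0:
            continue  # flat baseline: z-score undefined, skip this point
        run = series[i:i + persist]
        if all(abs(x - mu) > z * sigma for x in run):
            flagged.append(i)
    return flagged


# Hypothetical metric: a one-sample spike at index 6, a sustained jump from index 12.
series = [30, 31, 29, 30, 31, 30, 75, 30, 29, 31, 30, 31, 60, 61, 59, 60, 61]
noisy = anomalies(series)               # [6, 12]
sustained = anomalies(series, persist=2)  # [12]
```

Here the spike is reported only when `persist=1`, while the sustained jump is reported either way. Real metrics add daily cycles, trends, and gradual drift, which simple rules like this handle poorly.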

© LogicMonitor 2026 | All rights reserved. | All trademarks, trade names, service marks, and logos referenced herein belong to their respective companies.