How We Use Quarkus With Kafka in Our Multi-Tenant SaaS Architecture

At LogicMonitor, we deal primarily with large quantities of time series data. Our backend infrastructure processes billions of metrics, events, and configurations daily.

In previous blogs, we discussed our transition from a monolith to microservices. We also explained why we chose Quarkus as the framework for our Java-based microservices.

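In this blog we will cover how we partition Kafka topics across tenants, how we use the Kafka support in Quarkus, how we deploy and autoscale the microservices on Kubernetes, and how we monitor them.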

LogicMonitor’s Metric Pipeline, where we built out multiple microservices with Quarkus in our environment, is deployed on the following technology stack:

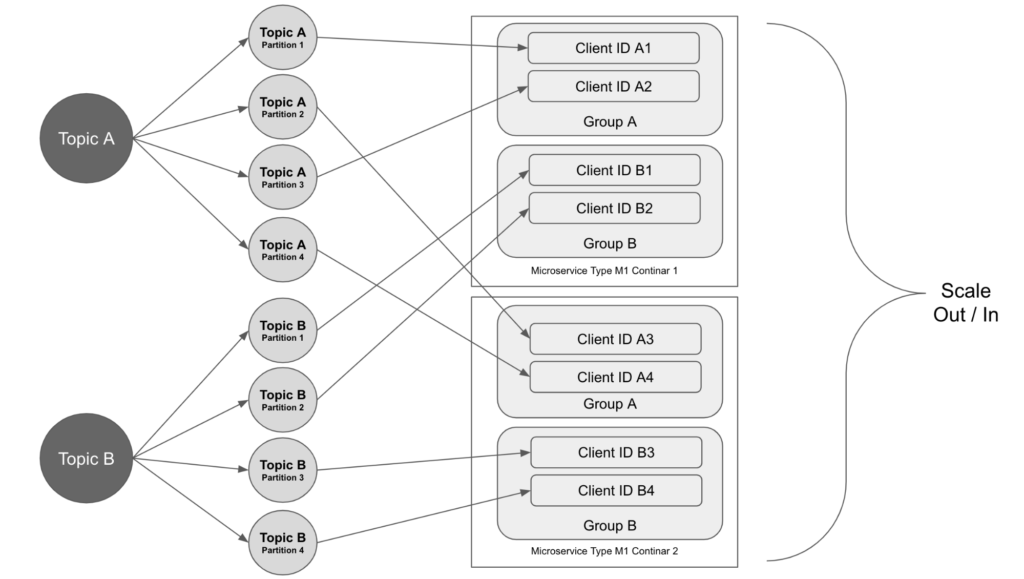

Our microservices communicate through Kafka topics. Each microservice consumes data messages from one or more Kafka topics and publishes its processing results to other topics.

Each Kafka topic is divided into partitions. Data messages from multiple tenants that share the same Kafka cluster are sent to the same topics.

When a microservice publishes a data message to a Kafka topic, the target partition can be chosen randomly or computed from the message's key by a partitioning algorithm. We chose to use an internal ID as the message key so that time-series data messages from the same device arrive at the same partition in order and are processed in that order.
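To illustrate why a stable key keeps a device's messages in order, here is a minimal sketch of key-based partition selection. It is not Kafka's actual partitioner (which hashes the serialized key with murmur2); the class name `KeyPartitioner`, the key format, and the hash function are assumptions chosen only to show the idea that the same key always maps to the same partition.

```java
import java.nio.charset.StandardCharsets;
import java.util.Arrays;

/** Simplified stand-in for a key-based partitioner (hypothetical, not Kafka's murmur2 implementation). */
final class KeyPartitioner {
    private KeyPartitioner() {}

    /** Maps a message key to a partition in the range [0, numPartitions). */
    static int partitionFor(String key, int numPartitions) {
        if (numPartitions <= 0) {
            throw new IllegalArgumentException("numPartitions must be positive");
        }
        // Hash the UTF-8 bytes of the key; any deterministic hash gives the same-key-same-partition property.
        int hash = Arrays.hashCode(key.getBytes(StandardCharsets.UTF_8));
        // floorMod keeps the result non-negative even when the hash is negative.
        return Math.floorMod(hash, numPartitions);
    }
}
```

Calling `KeyPartitioner.partitionFor("device-42", 12)` repeatedly returns the same partition number, so every message keyed by that (hypothetical) device ID is appended to one partition and read back in the order it was written.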

The data on a topic can sometimes be unevenly distributed across its partitions. In our case, some data messages require more processing time than others, and some partitions receive more messages than others. This causes some microservice instances to fall behind. To solve this problem, we separated the data messages into different topics based on their complexity and configured the consumer groups differently. This allows us to scale services in and out more efficiently.
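The routing idea can be sketched as follows. The source does not say how complexity is measured or what the topics are called, so the datapoint-count threshold, the `Complexity` enum, the `TopicRouter` class, and the topic names `metrics-light` and `metrics-heavy` are all hypothetical.

```java
import java.util.Map;

/** Hypothetical complexity classes; the real classification criteria are not given in the text. */
enum Complexity { LIGHT, HEAVY }

/** Picks a destination topic for a message based on an assumed cost proxy. */
final class TopicRouter {
    // Hypothetical topic names, one per complexity class.
    private static final Map<Complexity, String> TOPICS = Map.of(
            Complexity.LIGHT, "metrics-light",
            Complexity.HEAVY, "metrics-heavy");

    private final int heavyThreshold;

    TopicRouter(int heavyThreshold) {
        this.heavyThreshold = heavyThreshold;
    }

    /** Classifies a message by the number of datapoints it carries (an assumption for illustration). */
    Complexity classify(int datapointCount) {
        return datapointCount >= heavyThreshold ? Complexity.HEAVY : Complexity.LIGHT;
    }

    /** Returns the topic that messages of this size should be published to. */
    String topicFor(int datapointCount) {
        return TOPICS.get(classify(datapointCount));
    }
}
```

With separate topics, the consumer group reading the heavy topic can be given more instances or different settings than the one reading the light topic, so slow messages no longer hold up fast ones.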

The microservice that consumes the data from a topic runs multiple instances, all of which join the same consumer group. The partitions are assigned to the consumers in the group. When the service scales out, more instances are created and join the consumer group. The partition assignments are then rebalanced among the consumers, and each instance gets one or more partitions to work on.

Because a partition is consumed by only one instance in the group at a time, the total number of partitions determines the maximum number of instances that the microservice can usefully scale out to. When configuring the partitions of a topic, we should consider how many instances of the microservice will be needed to process the volume of data.

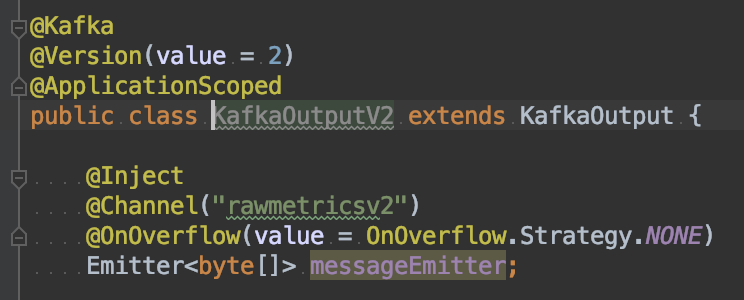
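The partition limit on scaling can be seen in a small simulation. This is a simplified round-robin assignment, not one of Kafka's real assignors (range, round-robin, sticky, and cooperative sticky all differ in detail); the class name `PartitionAssignment` is hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

/** Simplified model of spreading partitions over the consumers in one group. */
final class PartitionAssignment {
    private PartitionAssignment() {}

    /** Returns, for each consumer index, the list of partition numbers it owns. */
    static List<List<Integer>> assign(int numPartitions, int numConsumers) {
        if (numPartitions < 0 || numConsumers <= 0) {
            throw new IllegalArgumentException("need numPartitions >= 0 and numConsumers > 0");
        }
        List<List<Integer>> owned = new ArrayList<>();
        for (int c = 0; c < numConsumers; c++) {
            owned.add(new ArrayList<>());
        }
        // Each partition goes to exactly one consumer in the group.
        for (int p = 0; p < numPartitions; p++) {
            owned.get(p % numConsumers).add(p);
        }
        return owned;
    }

    /** Counts consumers that received no partition and therefore have nothing to process. */
    static long idleConsumers(int numPartitions, int numConsumers) {
        return assign(numPartitions, numConsumers).stream().filter(List::isEmpty).count();
    }
}
```

With 6 partitions and 3 consumers, each consumer owns two partitions. With 6 partitions and 8 consumers, two consumers own nothing, which is why adding instances beyond the partition count does not increase throughput.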

We started with Quarkus’s Kafka extension based on MicroProfile Reactive Messaging: smallrye-reactive-messaging-kafka. It’s easy to use and has great support for health checks.

We set up the Kafka producer with imperative usage. A couple of configuration settings need to be adjusted to get good producer throughput; we described them in our Quarkus vs. Spring blog.

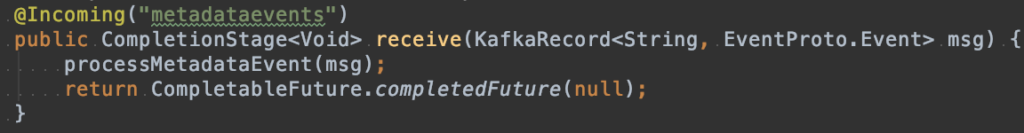

However, we did have some challenges while setting up the Kafka consumers. These are also mentioned in the Quarkus vs. Spring blog.

In the end, we chose to implement the Kafka consumers with the Apache Kafka client. Since then, multiple improvements have been added to the Kafka support in MicroProfile Reactive Messaging, for example, allowing multiple consumer clients and supporting subscription to topics by pattern. In the future, we may reevaluate the Kafka extension in Quarkus for the consumer implementation.

All of our microservices are deployed in Docker containers on Kubernetes clusters. In a Kubernetes cluster, multiple namespaces are used to divide the resources between the multiple tenants. Most of our microservices are deployed at the namespace level.

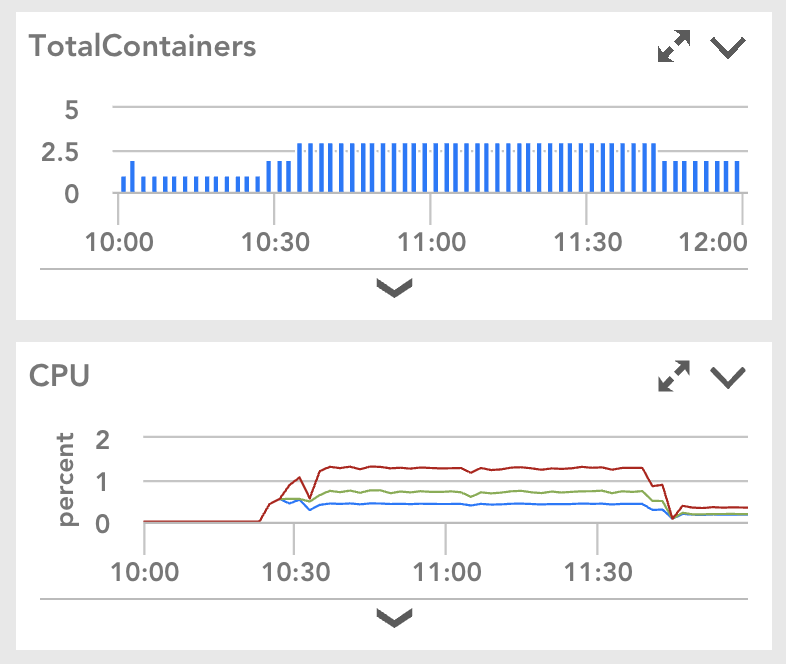

At the cluster level, the Kubernetes cluster is scaled up and down by the Cluster Autoscaler. For each microservice in a namespace, we use the Horizontal Pod Autoscaler to automatically scale the number of container replicas based on the application's CPU utilization. The number of replicas of a microservice in a namespace depends on the load from the tenants belonging to that namespace. When the load goes up and the CPU utilization of a microservice increases, more replicas of the service are created automatically. Once the load goes down and the CPU utilization decreases, the number of replicas scales down accordingly. Our next goal is to use the Horizontal Pod Autoscaler with custom metrics from the application.

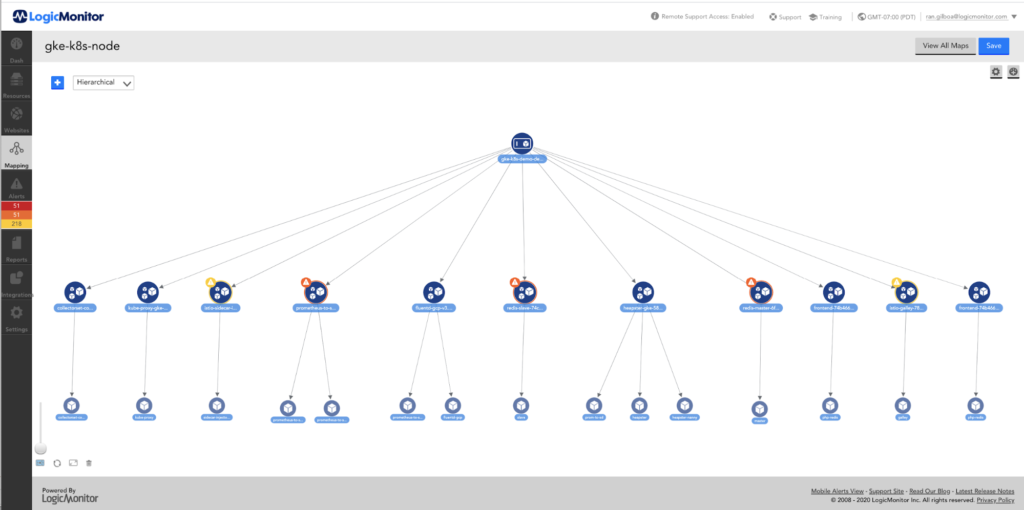
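The replica count the Horizontal Pod Autoscaler aims for follows the formula in the Kubernetes documentation: desired replicas = ceil(current replicas × current metric / target metric), bounded by the configured minimum and maximum. The sketch below applies that formula to CPU utilization. The class name `HpaCalculator` and the example numbers are hypothetical, and the real controller also applies a tolerance band and stabilization behavior that are left out here.

```java
/** Illustrative calculation of the HPA target replica count for a utilization metric. */
final class HpaCalculator {
    private HpaCalculator() {}

    /**
     * Computes ceil(currentReplicas * current / target), clamped to [minReplicas, maxReplicas].
     * Utilization values are percentages, for example 90.0 for 90%.
     */
    static int desiredReplicas(int currentReplicas,
                               double currentCpuUtilization,
                               double targetCpuUtilization,
                               int minReplicas,
                               int maxReplicas) {
        if (currentReplicas <= 0 || targetCpuUtilization <= 0 || minReplicas > maxReplicas) {
            throw new IllegalArgumentException("invalid autoscaling parameters");
        }
        double ratio = currentCpuUtilization / targetCpuUtilization;
        int desired = (int) Math.ceil(currentReplicas * ratio);
        // Never go below the floor or above the ceiling configured for the service.
        return Math.max(minReplicas, Math.min(maxReplicas, desired));
    }
}
```

For example, 4 replicas running at 90% CPU against a 60% target give a desired count of 6, while the same 4 replicas at 30% CPU scale down to 2.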

All of our microservices are monitored with LogicMonitor. Here is an example from the monitoring dashboard. It shows the number of container replicas being scaled up and down automatically as CPU utilization changes.

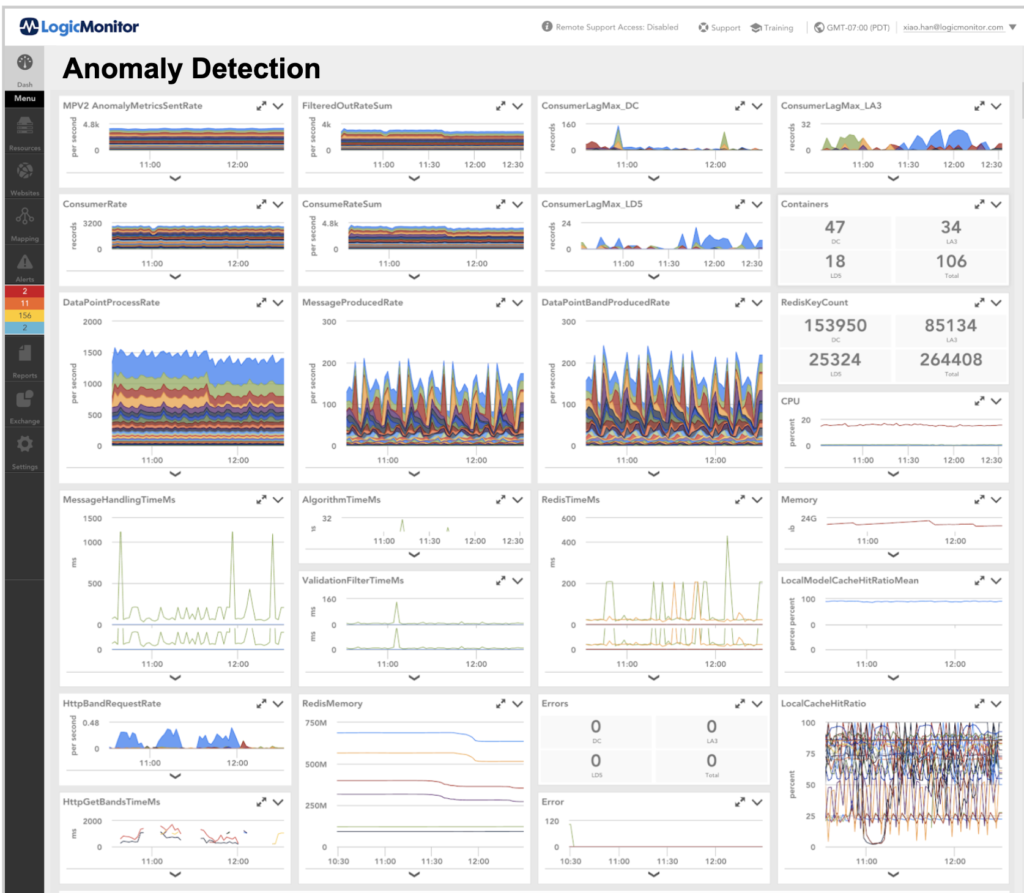

We use LogicMonitor for the microservice KPI monitoring. The KPI metrics include but are not limited to:

Here is an example of our Anomaly Detection microservice dashboard:

© LogicMonitor 2026 | All rights reserved. | All trademarks, trade names, service marks, and logos referenced herein belong to their respective companies.