Top 3 Things to Consider When Selecting a Log Analysis Platform

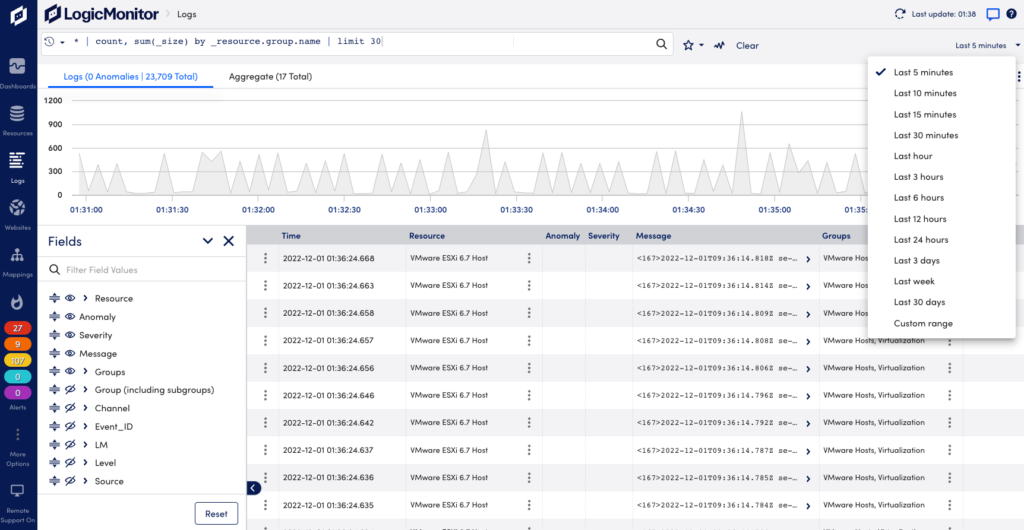

Effective log analysis can significantly reduce the time you spend investigating and troubleshooting incidents. With so many log analysis platforms available, choosing one can be overwhelming, and it is hard to know what to look for. In this short guide, we share the top three things to consider when selecting a log analysis platform for your business: automation, context, and intelligence.

The first consideration is automation. From applications and web servers to network devices, every layer of the modern IT infrastructure stack generates data and logs continuously. The sheer volume of that log data can make it impossible for IT teams to use logs efficiently to understand, troubleshoot, and track the changes that occur within their environment.

Reviewing this data efficiently, both to troubleshoot and to proactively identify potentially catastrophic incidents, is a crucial part of the IT Operations workflow. Searching through logs manually for answers can needlessly extend troubleshooting times.

A log analysis platform that automates log review and surfaces the log data likely to be relevant for troubleshooting can save your team valuable time and reduce mean time to repair (MTTR).
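To make "surfacing relevant log data" more concrete, here is a minimal Python sketch of one common technique. It is not a description of how LM Logs works. It collapses raw log lines into templates by masking variable tokens, then lists the rarest templates first. The masking rules and the `to_template` and `summarize` names are hypothetical, and real tools use far more robust parsing.

```python
import re
from collections import Counter

# Hypothetical masking rules: replace tokens that usually differ between
# otherwise identical messages with placeholders. Order matters: IPs and
# hex values are masked before plain numbers.
MASKS = [
    (re.compile(r"\b\d{1,3}(?:\.\d{1,3}){3}\b"), "<IP>"),
    (re.compile(r"\b0x[0-9a-fA-F]+\b"), "<HEX>"),
    (re.compile(r"\b\d+\b"), "<NUM>"),
]


def to_template(line: str) -> str:
    """Collapse a raw log line into a template by masking variable tokens."""
    for pattern, placeholder in MASKS:
        line = pattern.sub(placeholder, line)
    return line


def summarize(lines: list[str], top: int = 5) -> list[tuple[str, int]]:
    """Group lines by template and return up to `top` templates, rarest first.

    Rare messages are often the ones worth a human's attention, while
    high-volume routine messages collapse into a single counted entry.
    """
    counts = Counter(to_template(line) for line in lines)
    return sorted(counts.items(), key=lambda item: (item[1], item[0]))[:top]
```

For example, three `GET /health` lines that differ only in their numbers collapse into one template, so a single `disk full` message appears at the top of the summary instead of being buried among them.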

The second consideration is context. Finding useful information in your logs is only half the battle. You need the right information at the right time, and in the right context, to effectively prevent or resolve an issue. Typically, you start from a metric alert indicating that something is wrong and then turn to the logs to understand why the issue occurred. That jump often requires context switching, and each time you switch contexts you risk losing valuable information.

Look for a log analysis platform that minimizes context switching and integrates the sources of information you rely on, such as metrics and logs, as seamlessly as possible. Besides helping you troubleshoot faster, such a platform can correlate data sets, for example by linking a metric alert to the logs the same resource emitted around that time. Siloed platforms make that kind of correlation difficult.
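As a rough illustration of the metric-to-log jump described above, the Python sketch below assumes that every log entry and metric alert carries a timestamp and the name of the resource that produced it. The `LogEntry`, `MetricAlert`, and `logs_for_alert` names are hypothetical. Given an alert, the function returns the logs from the same resource within a fixed window around the alert time, which is the context you would otherwise gather by hand in a separate tool.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class LogEntry:
    timestamp: datetime
    resource: str  # host or device that emitted the log (assumed field)
    message: str


@dataclass
class MetricAlert:
    timestamp: datetime
    resource: str  # host or device whose metric triggered the alert
    metric: str


def logs_for_alert(alert: MetricAlert, logs: list[LogEntry],
                   window: timedelta = timedelta(minutes=5)) -> list[LogEntry]:
    """Return logs from the alerting resource emitted within `window`
    before or after the alert, oldest first."""
    start, end = alert.timestamp - window, alert.timestamp + window
    related = [
        log for log in logs
        if log.resource == alert.resource and start <= log.timestamp <= end
    ]
    return sorted(related, key=lambda log: log.timestamp)
```

The five-minute default window is an arbitrary assumption. In practice, the right window depends on how quickly a given failure propagates.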

The third consideration is intelligence. It is important to understand how a monitoring platform's alerting and thresholds work. Inefficient as it may sound, many platforms require you to define what constitutes an issue before they will alert on it. In most cases, this means an incident must happen first, and only then are thresholds set and alert notifications sent out. As IT infrastructure grows more complex, the failure landscape broadens: the network, the cloud, and the applications are just a few of the places where something can fail.

Look for a platform with the intelligence to detect and highlight anomalies in your log data for you, without the overhead of predefining static log alert conditions before you get any value. Anomaly detection of this kind can save you time and improve the chance that you are notified when something does need your attention.

Anomaly detection in logs can also catch symptoms or early warnings of an issue before the issue actually occurs. A notification about such a warning gives you the opportunity to act and prevent the issue altogether.
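The following Python sketch shows one simple form of log anomaly detection, chosen for illustration rather than taken from any particular product. It compares log templates in a current window against a baseline window and flags templates that are new or whose count jumped sharply. It assumes both windows cover the same length of time and that digits are the only variable part of a message. The `template` and `find_anomalies` names and the default `spike_factor` of 5 are hypothetical.

```python
import re
from collections import Counter


def template(line: str) -> str:
    # Simplifying assumption: digits are the only variable part of a message.
    return re.sub(r"\d+", "<NUM>", line)


def find_anomalies(baseline: list[str], current: list[str],
                   spike_factor: float = 5.0) -> dict[str, str]:
    """Compare a current window of logs against an equal-length baseline window.

    A template is flagged "new" if it never appeared in the baseline, or
    "spike" if its count grew by at least `spike_factor` times. No
    per-message alert conditions have to be written in advance.
    """
    base = Counter(template(line) for line in baseline)
    now = Counter(template(line) for line in current)
    anomalies = {}
    for tmpl, count in now.items():
        if tmpl not in base:
            anomalies[tmpl] = "new"
        elif count >= spike_factor * base[tmpl]:
            anomalies[tmpl] = "spike"
    return anomalies
```

Suppose the baseline contains routine logins and a single `retrying connection to db` message, and the current window contains six retries plus a first-ever `TLS certificate expires in 7 days` warning. The sketch flags the retries as a spike and the certificate message as new, and neither flag required an alert rule. The certificate warning is the kind of early signal that lets you act before an outage.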

Most log analysis platforms seem to compromise on at least one of the three areas explained above: automation, context, and intelligence. LM Logs by LogicMonitor is different and offers a unique approach to log analysis centered on maximizing automation, context, and intelligence. If you are interested in learning more, reach out to your Customer Success Manager or sign up for a 14-day free trial of our logs platform!

© LogicMonitor 2026 | All rights reserved. | All trademarks, trade names, service marks, and logos referenced herein belong to their respective companies.