Almost every piece of IT infrastructure generates logs in some form. Log events from various resources arrive at the LM Logs ingestion endpoint for further processing. During ingestion, information in the log lines is parsed to make it available for searching and data analysis.

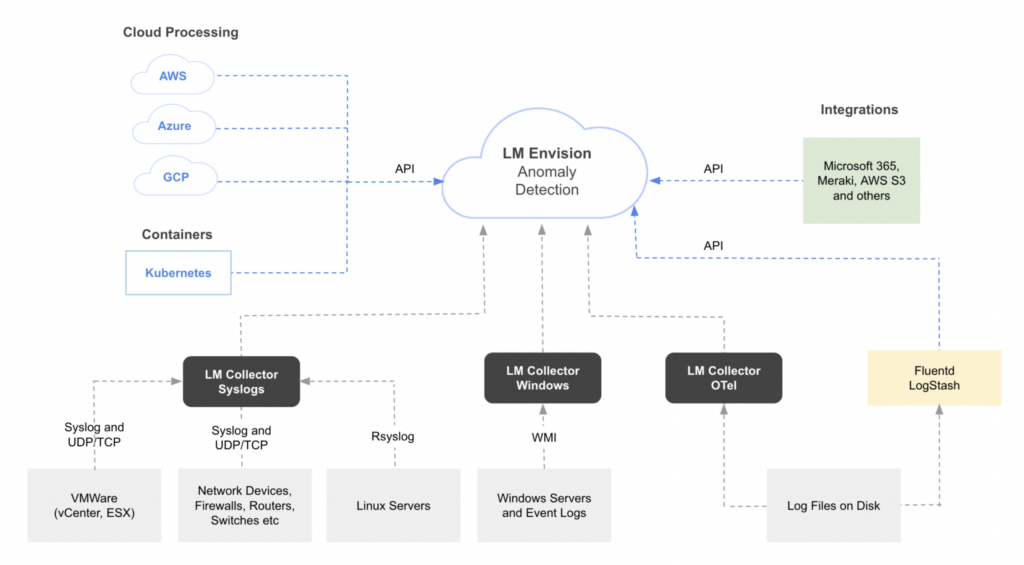

When setting up LM Logs, there are different ways of configuring resources and services to collect and send log data to LogicMonitor. Often an LM Collector is used, but you can also use the Logs REST API to send log events. The following shows examples of different log sources and methods for collecting and sending log data to LogicMonitor.

Sending Data to LM Logs

Resources must be configured to forward log data to LM Logs using one of various log data input methods. Because some methods are better suited for certain types of log data, choosing the right integration to send your data can improve how LogicMonitor processes the data and detects anomalies. For more information, see Log Anomaly Detection.

The various plugins and integrations offered by LogicMonitor for external systems merely help forward the log data. The integrations provide prewritten code that you can use in your environment to get your logs from wherever they are to the LogicMonitor API.

Log Input Options

Options for sending data to LogicMonitor depend on where the data is coming from:

- A resource, for example a host machine that generates log data.

- Log collectors or log servers.

- Cloud services.

- Other applications and technologies, including custom logs.

Available log data input options are described in the following.

LogSource

A LogSource provides templates that simplify configuration of log data collection and forwarding. LogSource is available for common log data sources like Syslog, Windows Events, and Kubernetes Events. A LogSource contains details about what logs to get, where to get them, and which fields should be parsed before being sent to LM Logs for ingestion. For more information, see LogSource Overview.

Recommendation: LogSource is the recommended method to enable LM Logs. However, to use LogSource, the LM Collector must be version EA 31.200 or later.

The following provides an overview of options for collecting and sending log data from different datasources to LM Logs.

| Datasource | Description | Using LogSource | Other configuration options |
|---|---|---|---|
| Network devices, firewalls, routers, and switches | Forward Syslog logs using standard UDP/TCP protocols. | LogSource for Syslog. See Syslog LogSource Configuration. | Configure the log collection. See Sending Syslog Logs. |
| Linux servers | Forward Syslog logs from Unix-based systems. | LogSource for Syslog. See Syslog LogSource Configuration. | Configure the log collection. See Sending Syslog Logs. |
| VMware (vCenter, ESX) | Forward logs from VMware hosts using the built-in vmsyslogd service. | LogSource for Syslog. See Syslog LogSource Configuration. | N/A |
| Windows Servers and Event Logs | Forward logs from Windows-based systems using WMI. | LogSource for Windows Event Logging. See Windows Event Logging LogSource Configuration. | Install and configure the Windows Events LM Logs DataSource. See Sending Windows Event Logs. |
| Cloud Services – Amazon Web Services (AWS) | Forward Amazon CloudWatch logs using a Lambda function configured to send log events. | N/A | Configure the collection and forwarding of AWS logs. See Sending AWS Logs. |
| Cloud Services – Microsoft Azure | Forward logs using an Azure function that consumes logs from an Event Hub. | N/A | Configure the collection and forwarding of Azure logs. See Sending Azure Logs. |
| Cloud Services – Google Cloud Platform (GCP) | Forward different types of application logs from various GCP resources. | N/A | Configure the collection and forwarding of GCP logs. See Sending GCP Logs. |
| Containers – Kubernetes | Forward logs from Kubernetes clusters, cluster groups, containerized applications, and pods. | LogSource for Kubernetes Event Logging. See Kubernetes Event Logging LogSource Configuration. LogSource for Kubernetes Pods. See Kubernetes Pods LogSource Configuration. | Install and configure the LM Kubernetes Monitoring Integration. The integration includes methods for logs for events, clusters, and pods. See Sending Kubernetes Logs and Events. |
| Log files on disk – application traces | Forward traces from instrumented applications. | LogSource for Log Files. See Log Files LogSource Configuration. | N/A |
| Log files on disk – Logstash events | Forward Logstash events to the LM Logs ingestion API. Can be used for most datasources. | LogSource for Log Files. See Log Files LogSource Configuration. | Install and configure the LM Logs Logstash plugin. See Sending Logstash Logs. |
| Log files on disk – any files | Forward logs from multiple sources, structure the data in JSON format, and forward to the LM Logs ingestion API. Can be used for most datasources. | LogSource for Log Files. See Log Files LogSource Configuration. | Install and configure the LM Logs Fluentd plugin. See Sending Fluentd Logs. |
| Custom logs | Forward custom logs directly to your LM account through the public API. Use this option if a log integration isn’t available, or you have custom logs you want to analyze. | LogSource for API Script (supports API filtering). See Script Logs LogSource Configuration. | Configure the log collection. See Sending Logs to Ingestion API. |

Recommendation: Ensure you use the available filtering options to remove logs that contain sensitive information so that they are not sent to LogicMonitor.

Deviceless Logs

In LM Logs, you can also view logs from resources that are not monitored by LogicMonitor. Logs that are not mapped to an LM-monitored resource (also called “deviceless logs”) are still available to view and search. For such logs, the Resource and Resource Group fields are empty in the Logs page listing, and some functionality, such as log alerting and anomaly detection, may not be available in certain situations.

Log Alerting for Deviceless Logs

You can create log processing pipelines for unmapped resources. Because there is no LM-monitored resource or resource group for these logs, LogicMonitor automatically associates the pipeline with a special resource and resource group. The resource name is the same as the pipeline name. The resource group for unmapped resources is called “LogPipelineResources”.

Note: The unmapped resource group LogPipelineResources does not appear in the Resource tree. However, so that you can assign permissions, this resource group appears at Settings > Users and Roles > User Access > Roles > Manage Roles.

Log alerts are created based on the alert conditions configured for the pipeline. You can see alerts for unmapped resources on the Alerts page. By default, log alerts for unmapped resources can only be seen by users with administrator access. You can change this by navigating to Settings > Users and Roles and assigning the desired permission to the LogPipelineResources group.

Anomaly Detection for Deviceless Logs

For deviceless logs, anomaly detection is based on the service (resource.service.name) and namespace (resource.service.namespace) keys. If these keys are not present in the ingested log event, anomaly detection is not performed. For Syslog, you can use Log Fields from LogSource to provide these values. For more information, see Syslog LogSource Configuration.
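As a rough illustration of the rule above, the following Python sketch checks whether a log event carries both keys before it is sent. It assumes the event is a flat dictionary, and the function name and sample events are hypothetical; none of this is part of a LogicMonitor library.

```python
# Keys that anomaly detection relies on for deviceless logs (taken from the text above).
REQUIRED_KEYS = ("resource.service.name", "resource.service.namespace")


def has_anomaly_detection_keys(event: dict) -> bool:
    """Return True if the event has a non-empty value for both required keys."""
    return all(bool(event.get(key)) for key in REQUIRED_KEYS)


# Hypothetical events, for illustration only.
complete = {
    "message": "payment failed",
    "resource.service.name": "checkout",
    "resource.service.namespace": "shop",
}
missing_namespace = {
    "message": "payment failed",
    "resource.service.name": "checkout",
}

print(has_anomaly_detection_keys(complete))           # True
print(has_anomaly_detection_keys(missing_namespace))  # False
```

A check like this could run in a custom forwarder so that events missing either key are flagged before ingestion.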

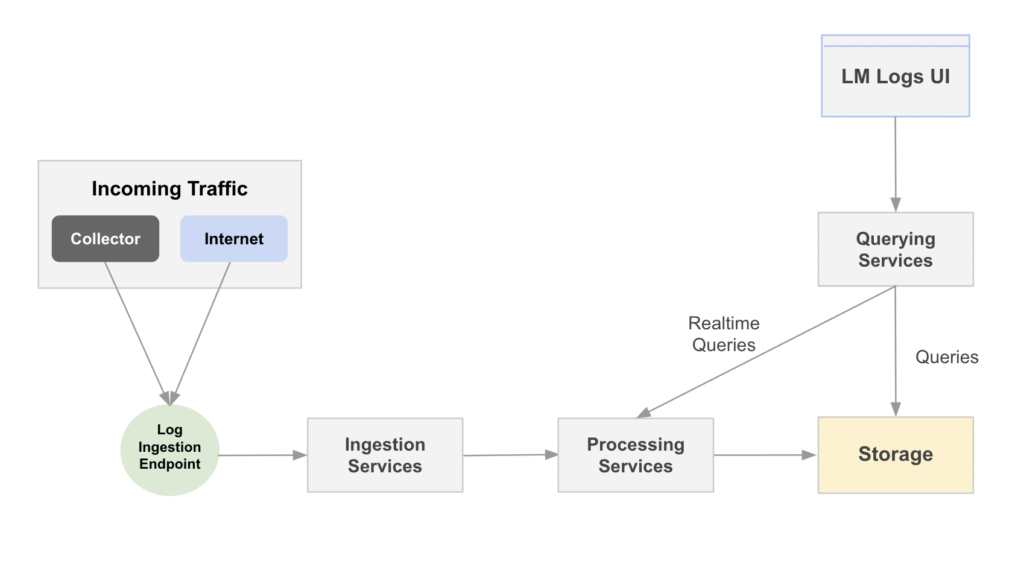

Log events are received through the LogIngest API, before being mapped to resources in LogicMonitor and further processed and stored.

The high-level process includes the following:

- Collection—Locally using agents, or sent by the data source.

- Ingestion—Sending the collected data to an ingestion layer.

- Storage—Storing logs as long as they are needed for compliance.

- Analysis—Through search or a live analysis system, and alert creation.

The log processing is described in more detail in the following.

Log Processing Flow

The log processing flow consists of the following stages:

- Incoming Traffic—Log events are received from various resources. These can be a host machine, LM Log collectors or log servers, cloud services, or other applications and technologies. For more information, see LM Logs Overview.

- Log Ingestion Endpoint—Provides the API endpoint for sending events to LM Logs. Enriches logs with metadata, and forwards them to the log ingestion services for further processing.

- Ingestion Services—Receives log events as JSON payloads. Performs validation, authentication, authorization and resource mapping.

- Processing Services—Consumes ingested logs, applies anomaly detection algorithm, and prepares logs for storage. Triggers alerts based on pipeline and alert condition configurations.

- LM Logs UI—Receives user input such as log queries, added pipelines, and log usage.

- Querying Services—Processes queries received from the UI and sends saved queries for storage.

- Storage—Stores events and anomalies from log processing, and queries from querying services.

Log Processing Components

The following provides an overview of components involved in log ingestion and processing.

Logs Ingest Public API

The LogIngest API receives log events from different collection sources. You can also send logs directly to LogicMonitor through the logs ingestion API. For more information, see Sending Logs to the LM Logs Ingestion API.
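To make the direct-send option more concrete, here is a minimal Python sketch that builds (but does not send) a signed ingestion request. It assumes the LMv1 token scheme used by the LogicMonitor REST API, a resource path of `/log/ingest`, and event fields named `message` and `_lm.resourceId`; treat all of these, along with the function name, as assumptions to verify against Sending Logs to the LM Logs Ingestion API.

```python
import base64
import hashlib
import hmac
import json
import time


def build_ingest_request(access_id, access_key, company, events, epoch_ms=None):
    """Build the URL, headers, and body for a log ingestion request (nothing is sent)."""
    resource_path = "/log/ingest"  # assumed path; check the API reference
    body = json.dumps(events)
    epoch = str(epoch_ms if epoch_ms is not None else int(time.time() * 1000))

    # LMv1 signature: base64 of the hex HMAC-SHA256 digest over verb + epoch + body + path.
    to_sign = "POST" + epoch + body + resource_path
    digest = hmac.new(access_key.encode(), to_sign.encode(), hashlib.sha256).hexdigest()
    signature = base64.b64encode(digest.encode()).decode()

    headers = {
        "Content-Type": "application/json",
        "Authorization": f"LMv1 {access_id}:{signature}:{epoch}",
    }
    url = f"https://{company}.logicmonitor.com/rest{resource_path}"
    return url, headers, body


# Hypothetical credentials and event, for illustration only.
events = [{"message": "disk usage at 91%", "_lm.resourceId": {"system.hostname": "web-01"}}]
url, headers, body = build_ingest_request("my-access-id", "my-access-key", "acme", events)
print(url)
```

The returned values can be passed to any HTTP client. Keeping the request construction separate from the network call makes the signing step easy to test in isolation.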

Log Ingestion

When setting up LM Logs, resources and services are configured to forward data to one of various log ingestion methods. For more information, see About Log Ingestion.

Log Anomaly Detection

Anomalies are changes in log data that fall outside of normal patterns. LM Logs detects anomalies based on parsed log event structures. For more information, see Log Anomaly Detection.

Log Processing Pipelines

Log events are channeled into pipelines that analyze structure patterns to look for anomalies. You can define filters and set alert conditions to track specific logs. For more information, see Log Processing Pipelines.

Log Alert Conditions

Log alerts are alert conditions based on log events and log processing pipelines. Alert conditions use regular expression patterns to match ingested logs and trigger LM alerts. For more information, see Log Alert Conditions.
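The idea of matching ingested logs against a regular expression can be tried out locally. The sketch below uses Python's `re` module with a hypothetical pattern and sample log lines; the regular expression dialect that LM Logs supports may differ, so treat it as an illustration of the concept rather than a ready-made alert condition.

```python
import re

# Hypothetical alert condition: failed SSH logins from an IPv4 address.
pattern = re.compile(r"Failed password for (invalid user )?\S+ from \d{1,3}(\.\d{1,3}){3}")

# Hypothetical log lines, for illustration only.
logs = [
    "Accepted publickey for deploy from 10.0.0.5 port 50122 ssh2",
    "Failed password for invalid user admin from 203.0.113.7 port 4022 ssh2",
    "Connection closed by 203.0.113.7",
]

# Keep only the lines that would satisfy the condition.
matching = [line for line in logs if pattern.search(line)]
print(matching)
```

Testing a pattern against representative log lines before saving it helps avoid conditions that match too broadly.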

Log Queries

The logs query language expands the standard search capabilities beyond keyword searches and filtering to narrow down information when troubleshooting. For more information, see Query Language Overview.

Log Retention

Log retention refers to how long event logs are archived and stored. For more information, see Data Retention.

Log Usage

Logs volume usage and ingestion quotas are collected and enriched by the log process, and retrieved from metadata for input to the logs usage reporting. For more information, see LM Logs Usage Monitoring.

Collectors

Collectors retrieve data from monitored resources and provide the data to log ingestion. There are several types of collectors for log ingestion. For more information, see About the LM Collector.

Alerts

Alerts are generated based on filters and conditions configured for resources. The overall Alerts page displays alerts across all monitored resources. For more information, see What does LM Alert on?.

LogicMonitor provides different methods for sending logs from a monitored Kubernetes cluster to LM Logs. The choice of method depends on the type of logs that you want to send. You can use any of the following methods:

- Using LogicMonitor Collector:

    - Using LogSource: LogSource is the recommended method to enable LM Logs. To use LogSource, you must have EA Collector 31.200 or a later version installed on your machine. For more information, see Kubernetes Event Logging LogSource Configuration or contact your Customer Success Manager.

    - Using agent.conf: For Kubernetes events and Pod logs, configure the LogicMonitor Collector to collect and forward the logs from a monitored cluster or cluster group. For more information, see Sending Kubernetes Events and Pod logs using LogicMonitor Collector.

- Using lm-logs Helm chart: For Kubernetes logs, use the lm-logs Helm chart configuration which is provided as part of the LogicMonitor Kubernetes integration. For more information, see Sending Kubernetes Logs using lm-logs Helm chart.

Requirements

- LogicMonitor API tokens to authenticate all requests to the log ingestion API.

- LogicMonitor Collector installed and monitoring your Kubernetes cluster.

Sending Kubernetes Logs using lm-logs Helm Chart

You can install and configure the LogicMonitor Kubernetes integration to forward your Kubernetes logs to the LM Logs ingestion API.

Deploying

The Kubernetes configuration for LM Logs is deployed as a Helm chart.

1. Add the LogicMonitor Helm repository:

    helm repo add logicmonitor https://logicmonitor.github.io/k8s-helm-charts

If you already have the LogicMonitor Helm repository, you should update it to get the latest charts:

    helm repo update

2. Install the lm-logs chart, filling in the required values:

    helm install -n <namespace> \
      --set lm_company_name="<lm_company_name>" \
      --set lm_access_id="<lm_access_id>" \
      --set lm_access_key="<lm_access_key>" \
      lm-logs logicmonitor/lm-logs

Configuring Deviceless Logs for Kubernetes

Logs can be viewed in LM Logs even if the log is “deviceless” and not associated with an LM-monitored resource. Even without resource mapping, or when there are resource mapping issues, logs are still available for anomaly detection and to view and search.

For deviceless logs, log anomaly detection uses the “namespace” and “service” fields instead of “Device ID” when creating log profiles. To enable deviceless logs, set “fluent.device_less_logs” to “true” when configuring the lm-logs Helm chart. For more information, see Send Kubernetes Logs to LM Logs.

Sending Kubernetes Events and Pod Logs using LogicMonitor Collector

You can configure the LogicMonitor Collector to receive and forward Kubernetes Cluster events and Pod logs from a monitored Kubernetes cluster or cluster group.

Note: Use the LM Container Chart services for comprehensive Kubernetes monitoring metrics, logs, and events. For more information, see Installing the LM Container Helm Chart.

Requirements

- EA Collector 30.100 or later installed.

- You have already deployed LogicMonitor’s Kubernetes Monitoring.

- Access to the resources (events or pods) that you want to collect logs from.

Enabling the Events and Logs Collection

The following are options for enabling events and logs collection:

- Recommended: Modify the Helm deployment for Argus to enable events collection. For more information, see Kubernetes Events and Pod Logs Collection using LogicMonitor Collector.

- Alternative: Manually add the following properties to the monitored Kubernetes cluster group (or individual resources) in LogicMonitor.

| Property | Type | Default Value | Description |
|---|---|---|---|
| lmlogs.k8sevent.polling.interval.min | Integer | 1 | Polling interval in minutes for Kubernetes events collection. |
| lmlogs.k8spodlog.polling.interval.min | Integer | 1 | Polling interval in minutes for Kubernetes pod logs collection. |
| lmlogs.thread.count.for.k8s.pod.log.collection | Integer | 10 | The number of threads for Kubernetes pod logs collection. The maximum value is 50. |

Configuring Filters to Remove Logs

Note: Ensure you configure filters to remove log messages that contain sensitive information like credit cards, phone numbers, or personal identifiers so that these are not sent to LogicMonitor. You can also use filters to reduce the volume of non-essential log messages sent to the logs ingestion API queue.

The filtering criteria for Kubernetes Events are based on the fields “message”, “reason”, and “type”. For Kubernetes pod logs, you can filter the message fields. Filtering criteria can be defined using keywords, a regular expression pattern, specific values of fields, and so on. To configure filter criteria, uncomment to enable and then edit the filtering entries in agent.conf.

For example:

- To prevent INFO-level pod logs from being sent to LogicMonitor, uncomment or add the line: logsource.k8spodlog.filter.1.message.notcontain=INFO

- To send Kubernetes events of type=Normal, comment out the line: logsource.k8sevent.filter.1.type.notequal=Normal

For more information, see Kubernetes Events and Pod Logs Collection using LogicMonitor Collector.
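The two examples above suggest that a log is forwarded only when it satisfies the active filter conditions. The following Python sketch models that reading. It is an assumption-laden illustration, not the Collector's actual implementation: the function names are hypothetical, combining several filters with AND is an assumption, and regular expressions are applied here as a search rather than a full match.

```python
import re

# One predicate per filter operator that appears in the agent.conf keys.
OPERATORS = {
    "equal": lambda value, target: value == target,
    "notequal": lambda value, target: value != target,
    "contain": lambda value, target: target in value,
    "notcontain": lambda value, target: target not in value,
    "regexmatch": lambda value, target: re.search(target, value) is not None,
    "regexnotmatch": lambda value, target: re.search(target, value) is None,
}


def parse_filter(line: str):
    """Split 'logsource.<source>.filter.<n>.<field>.<operator>=<value>' into parts."""
    key, _, target = line.partition("=")
    parts = key.split(".")
    # parts: ['logsource', source, 'filter', index, field, operator]
    return parts[1], parts[4], parts[5], target


def should_forward(event: dict, source: str, filter_lines: list) -> bool:
    """Assumption: an event is forwarded only if it satisfies every active filter."""
    for line in filter_lines:
        filter_source, field, operator, target = parse_filter(line)
        if filter_source != source:
            continue  # filter belongs to the other log source
        if not OPERATORS[operator](event.get(field, ""), target):
            return False
    return True


active_filters = [
    "logsource.k8spodlog.filter.1.message.notcontain=INFO",
    "logsource.k8sevent.filter.1.type.notequal=Normal",
]

print(should_forward({"message": "INFO cache warmed"}, "k8spodlog", active_filters))  # False
print(should_forward({"message": "ERROR disk full"}, "k8spodlog", active_filters))    # True
print(should_forward({"type": "Normal", "reason": "Started"}, "k8sevent", active_filters))  # False
```

Under this model, commenting out the `type.notequal=Normal` line removes the condition, so events of type Normal are forwarded again.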

To configure filter criteria, configure the following agent.conf entries as applicable.

Collector agent.conf configurations

| Property | Type | Description |
|---|---|---|
| logsource.k8sevent.filter.1.message.equal | String | Matches Kubernetes events whose message equals the value provided in the filter. |
| logsource.k8sevent.filter.2.message.notequal | String | Matches Kubernetes events whose message does not equal the value provided in the filter. |
| logsource.k8sevent.filter.3.message.contain | String | Matches Kubernetes events whose message contains the value provided in the filter. |
| logsource.k8sevent.filter.4.message.notcontain | String | Matches Kubernetes events whose message does not contain the value provided in the filter. |
| logsource.k8sevent.filter.5.message.regexmatch | String | Matches Kubernetes events whose message matches the regular expression pattern provided in the filter. |
| logsource.k8sevent.filter.6.message.regexnotmatch | String | Matches Kubernetes events whose message does not match the regular expression pattern provided in the filter. |
| logsource.k8sevent.filter.7.reason.equal | String | Matches Kubernetes events whose reason equals the value provided in the filter. |
| logsource.k8sevent.filter.8.reason.notequal | String | Matches Kubernetes events whose reason does not equal the value provided in the filter. |
| logsource.k8sevent.filter.9.reason.contain | String | Matches Kubernetes events whose reason contains the value provided in the filter. |
| logsource.k8sevent.filter.10.reason.notcontain | String | Matches Kubernetes events whose reason does not contain the value provided in the filter. |
| logsource.k8sevent.filter.11.reason.regexmatch | String | Matches Kubernetes events whose reason matches the regular expression pattern provided in the filter. |
| logsource.k8sevent.filter.12.reason.regexnotmatch | String | Matches Kubernetes events whose reason does not match the regular expression pattern provided in the filter. |
| logsource.k8sevent.filter.13.type.equal | String | Matches Kubernetes events whose type equals the value provided in the filter. |
| logsource.k8sevent.filter.14.type.notequal | String | Matches Kubernetes events whose type does not equal the value provided in the filter. |
| logsource.k8sevent.filter.15.type.contain | String | Matches Kubernetes events whose type contains the value provided in the filter. |
| logsource.k8sevent.filter.16.type.notcontain | String | Matches Kubernetes events whose type does not contain the value provided in the filter. |
| logsource.k8sevent.filter.17.type.regexmatch | String | Matches Kubernetes events whose type matches the regular expression pattern provided in the filter. |
| logsource.k8sevent.filter.18.type.regexnotmatch | String | Matches Kubernetes events whose type does not match the regular expression pattern provided in the filter. |
| logsource.k8spodlog.filter.1.message.equal | String | Matches Kubernetes pod logs whose message equals the value provided in the filter. |
| logsource.k8spodlog.filter.2.message.notequal | String | Matches Kubernetes pod logs whose message does not equal the value provided in the filter. |
| logsource.k8spodlog.filter.3.message.contain | String | Matches Kubernetes pod logs whose message contains the value provided in the filter. |
| logsource.k8spodlog.filter.4.message.notcontain | String | Matches Kubernetes pod logs whose message does not contain the value provided in the filter. |

Helm-chart configurations

| Property | Description |
|---|---|
| lmlogs.k8sevent.enable=true | Sends events from pods, deployments, services, nodes, and so on to LM Logs. When false, ignores events. |
| lmlogs.k8spodlog.enable=true | Sends pod logs to LM Logs. When false, ignores logs from pods. |

Troubleshooting

Kubernetes Logs

- If you are not seeing Kubernetes logs in your LM Portal after a few minutes, it may be a resource mapping issue. Resource mapping for Kubernetes is handled by the Fluentd plugin.

- If mapping is correct, verify that the log file path is mounted. If the log file path is not mounted, edit the /k8s-helm-charts/lm-logs/templates/deamonset.yaml file to add the file path and volume.

For example, if the path to mount is /mnt/ephemeral/docker/containers/, make the following edits:

- Add the file path:

    name: ephemeraldockercontainers
    mountPath: /mnt/ephemeral/docker/containers/
    readOnly: true

- Add under volumes:

    name: ephemeraldockercontainers
    hostPath:
      path: /mnt/ephemeral/docker/containers/

Kubernetes Pod Logs

If you have enabled pod logs collection and forwarding, but you are not receiving pod logs in LM Logs, restart the Collector and increase the polling interval to 3-5 minutes.

Logstash is a popular open-source data collector which provides a unifying layer between different types of log inputs and outputs.

If you are already using Logstash to collect application and system logs, you can forward the log data to LogicMonitor using the LM Logs Logstash plugin.

This output plugin contains specific instructions for sending Logstash events to the LogicMonitor Log ingestion API. You can also install the Logstash Monitoring LogicModules for added visibility into your Logstash metrics alongside the logs.

Requirements

- A LogicMonitor account name.

- A LogicMonitor API token to authenticate all requests to the log ingestion API.

Installing the Plugin

Install the LM Logs Logstash plugin using Ruby Gems. Run the following command on your Logstash instance:

    logstash-plugin install logstash-output-lmlogs

Configuring the Plugin

The following is an example of the minimum configuration needed for the Logstash plugin. You can add more settings into the configuration file. See the parameters tables in the following.

    output {
      lmlogs {
        access_id => "access_id"
        access_key => "access_key"
        portal_name => "account-name"
        portal_domain => "LM-portal-domain"
        property_key => "hostname"
        lm_property => "system.sysname"
      }
    }

Note: The portal_domain is the domain of your LM portal. If it is not set, by default, it is set to logicmonitor.com. For example, if your LM portal URL is https://test.domain.com, portal_name should be set to test and portal_domain to domain.com. The supported domains are as follows:

- lmgov.us

- qa-lmgov.us

- logicmonitor.com
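The split between portal_name and portal_domain described in the note can be derived from the portal URL. Here is a small Python sketch of that split, using the note's own example; the function name is hypothetical, and the supported-domain list simply repeats the one above.

```python
from urllib.parse import urlparse

# Supported portal domains, as listed above.
SUPPORTED_DOMAINS = ("lmgov.us", "qa-lmgov.us", "logicmonitor.com")


def split_portal_url(url: str):
    """Return (portal_name, portal_domain) for a portal URL."""
    host = urlparse(url).hostname or ""
    # Everything before the first dot is the portal name; the rest is the domain.
    portal_name, _, portal_domain = host.partition(".")
    return portal_name, portal_domain


print(split_portal_url("https://test.domain.com"))        # ('test', 'domain.com')
print(split_portal_url("https://acme.logicmonitor.com"))  # ('acme', 'logicmonitor.com')

# Check a derived domain against the supported list before using it.
_, domain = split_portal_url("https://acme.logicmonitor.com")
print(domain in SUPPORTED_DOMAINS)  # True
```

The account name `acme` is a placeholder for illustration.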

Including and Excluding Metadata

By default, all metadata is included in the logs sent to LM Logs. You can also use the include_metadata parameter to include or exclude metadata fields. Add this parameter to the logstash.config file. If set to false, metadata fields will not be sent to the LM Logs ingestion. Default value is “true”.

You can also exclude specific metadata by adding the following to the logstash.config file:

    filter {
      mutate {
        remove_field => [ "[event][sequence]" ]
      }
    }

For more information, see the Logstash documentation.

Note: From version 1.1.0 of the Logstash plugin, all metadata is included by default. The include_metadata parameter was introduced in version 1.2.0.

Required Parameters

| Name | Description | Default |
|---|---|---|
| access_id | Username to use for HTTP authentication. | N/A |
| access_key | Password to use for HTTP authentication. | N/A |
| portal_name | The LogicMonitor portal account name. | N/A |

Optional Parameters

| Name | Description | Default |
|---|---|---|
| batch_size | The number of events to send to LM Logs at one time. Increasing the batch size can increase throughput by reducing HTTP overhead. | 100 |
| keep_timestamp | If false, LM Logs will use the ingestion timestamp as the event timestamp. | true |
| lm_property | Specify the key that will be used by LogicMonitor to match a resource based on property. | system.hostname |
| message_key | The key in the Logstash event that will be used as the log message. | message |
| property_key | The key in the Logstash event that holds the hostname value, which is mapped to lm_property. | hostname |
| timestamp_is_key | If true, LM Logs will use a specified key as the event timestamp value. | false |
| timestamp_key | If timestamp_is_key=true, LM Logs will use this key in the event as the timestamp. Valid timestamp formats are ISO8601 strings or epoch in seconds, milliseconds, and nanoseconds. | logtimestamp |
| include_metadata | If false, metadata will not be included in the logs sent to LM Logs. | false |

Note: For more information about the message_key and property_key values syntax, see this Logstash documentation.

Building

Run the following command in Docker to build the Logstash plugin:

    docker-compose run jruby gem build logstash-output-lmlogs.gemspec

Troubleshooting

If you are not seeing logs in LM Logs:

- Ensure that the resource from which the logs are expected is being monitored.

- If the resource exists, check that the lm_property used for mapping the resource is unique. Log ingestion will not work if lm_property is used for more than one resource.
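One way to check the uniqueness requirement above is to look for mapping values that are shared by more than one resource. The Python sketch below does this over a hypothetical inventory (resource name mapped to its lm_property value); how you export that inventory from your portal is outside the scope of this example.

```python
from collections import defaultdict


def find_ambiguous_values(resources: dict) -> dict:
    """Map each property value used by more than one resource to those resources."""
    by_value = defaultdict(list)
    for name, value in resources.items():
        by_value[value].append(name)
    return {value: names for value, names in by_value.items() if len(names) > 1}


# Hypothetical inventory: resource display name -> value of the mapping property.
inventory = {
    "web-01": "web-01.example.com",
    "web-01-clone": "web-01.example.com",
    "db-01": "db-01.example.com",
}

print(find_ambiguous_values(inventory))
# {'web-01.example.com': ['web-01', 'web-01-clone']}
```

Any value reported here would need to be made unique, or a different lm_property chosen, before logs can be mapped reliably.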

Recommendation: If this is the first time you are configuring Windows Events log ingestion, use the LogSource template. LogSource contains details about which logs to get, where to get them, and which fields should be considered for parsing. For more information, see LogSource Configuration.

The Windows_Events_LMLogs DataSource retrieves the logs using Windows Management Instrumentation (WMI) and pushes them to LM Logs using a BatchScript collection method. The log data is added to the metric payload and polled every 60 seconds, with a batch limit of 5000 events. If the number of events exceeds 5000, the DataSource sends the logs in batches of 5000 events. Because of this, there is no collector setup needed for Windows Event Log setup.
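The batching behavior described above can be pictured with a short Python sketch. It only illustrates splitting a list of events into chunks of at most 5000; the function name and sample events are hypothetical, and the DataSource's actual script is not shown here.

```python
from typing import Iterator, List

BATCH_LIMIT = 5000  # batch limit stated above


def batches(events: List[dict], limit: int = BATCH_LIMIT) -> Iterator[List[dict]]:
    """Yield consecutive slices of at most `limit` events, preserving order."""
    for start in range(0, len(events), limit):
        yield events[start:start + limit]


# Hypothetical poll result with 12,000 events.
events = [{"EventID": i} for i in range(12000)]
sizes = [len(batch) for batch in batches(events)]
print(sizes)  # [5000, 5000, 2000]
```

Because each slice keeps the original events untouched, batching in this way does not change event timestamps.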

Recommendation: Because there is no LM Collector setup needed, you should review the health of the LM Collectors monitoring your Windows servers.

Note: Batching the events should not alter the timestamps of the events when they are received. The timestamps viewed in LM Logs are the Windows Event Timestamp.

When you initially set up DataSource, it pre-parses the following metadata fields:

- EventID

- EventType

Note: Severity level “Critical” is not supported. LogSource only supports Error, Warning, Information, Success Audit, and Failure Audit event types. For more information, see Event Types from Microsoft.

- Channel Name

Recommendation: If you set up multiple DataSource configurations, you will receive duplicate logs. If this occurs, delete the other DataSource.

Required Properties to Activate a DataSource Configuration

| Property | Description |
|---|---|
| lmaccess.id | LogicMonitor logs ingestion API access ID |
| lmaccess.key | LogicMonitor logs ingestion API access key |
| lmlogs.winevent.channels | You must specify the Windows Events channels within this property. This contains the list of log files that you want to send to LM Logs, comma separated and with no spaces. For example, you can use the following: |

Note: lmaccess.id and lmaccess.key are LogicMonitor API Tokens that must have permissions to send logs to LM Logs.
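The value of lmlogs.winevent.channels must be comma separated with no spaces around the commas. The following Python sketch builds such a value from a list of channel names. The channel names Application, System, and Security are common Windows event logs used here as an illustrative assumption, and the function name is hypothetical.

```python
def channels_property(channels):
    """Join channel names with commas, dropping surrounding whitespace and empty entries."""
    cleaned = [name.strip() for name in channels if name.strip()]
    return ",".join(cleaned)


# Illustrative channel names; replace with the channels you want to collect.
value = channels_property(["Application", " System", "Security "])
print(value)  # Application,System,Security
```

The resulting string can be pasted into the property value when you add lmlogs.winevent.channels to a resource.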

Requirements for Ingesting Windows Event Logs

To ingest Windows Event Logs, you need the following:

- A LogicMonitor LMV1 API token, which is a key-based authentication that allows you to authenticate API calls to the LogicMonitor platform. It uses a key pair that consists of the Access ID and Access Key. If you have not created a LogicMonitor API token, see Adding an API Token for details.

- Windows servers as a managed resource. Your Windows servers must exist in LM as a managed resource and exist in the resource tree. This allows for easy ingestion since LogicMonitor will already have the necessary WMI credentials to pull the Windows Event logs.

- The Windows_Events_LMLogs DataSource installed. This LogicModule is available in your LogicMonitor portal. Navigate to Modules and search for the Windows_Events_LMLogs DataSource. For more information about installing the module, see Module Installation.

- Designated log file names for logs sent to LogicMonitor.

- The following API properties identified:

    - lmaccess.id

    - lmaccess.key

    - lmlogs.winevent.channels

For more information, see the Required Properties to Activate a DataSource configuration.

Note: Some event logs may not be automatically recognized by LogicMonitor. If this happens, you must create them in the Windows Registry. For more information, see Eventlog Key from Windows.

Configuring the Windows Events DataSource to Ingest Windows Event Logs

Recommendation: When configuring the DataSource, exclude the security audit success log level. This log level creates a high volume of logs and generally does not add significant value for troubleshooting purposes.

- Use the existing Windows_Events_LMLogs DataSource, or create the Windows Event DataSource.

- In LogicMonitor, select Resource Tree. Navigate to the Windows resource you want to ingest logs from.

- Select Manage Properties and add the properties in Required Properties to Activate a DataSource configuration.

After the properties are applied for the DataSource, the Windows Events for each of the specified Channels are pushed to LM Logs. Navigate to Resources to see the Channels listed as discovered instances for Windows_Events_LMLogs.

When viewing the graphs for the instances, the LM Logs API response codes only return data on the instance corresponding to the first channel listed in the device property. This ensures that response codes trigger a single alert, rather than one for each DataSource instance. This is because the DataSource makes one API request for all instances together instead of individually.

The DataSource is configured to trigger a Warning alert if the Response Code is greater than 207.

Recommendation: If this is the first time you are configuring Windows Events log ingestion, use the LogSource template. LogSource contains details about which logs to get, where to get them, and which fields should be considered for parsing. For more information, see LogSource Configuration.

The Windows_Events_LMLogs DataSource retrieves the logs using Windows Management Instrumentation (WMI) and pushes them to LM Logs using a BatchScript collection method. The log data is added to the metric payload and polled every 60 seconds, with a batch limit of 5000. If it exceeds 5000, DataSource sends the logs in batches of 5000 events. Because of this, there is no collector setup needed for Windows Event Log setup.

Recommendation: Because there is no LM Collector setup needed, you should review the health of the LM Collectors monitoring your Windows servers.

Note: Batching the events should not alter the timestamps of the events when they are received. The timestamps viewed in LM Logs are the Windows Event Timestamp.

When you initially set up DataSource, it pre-parses the following metadata fields:

- EventID

- EventType

  Note: Severity level “Critical” is not supported. LogSource only supports Error, Warning, Information, Success Audit, and Failure Audit event types. For more information, see Event Types from Microsoft.

- Channel Name

Recommendation: If you set up multiple DataSource configurations, you will receive duplicate logs. If this occurs, delete the other DataSource.

Required Properties to Activate a DataSource Configuration

| Property | Description |

| lmaccess.id | LogicMonitor logs ingestion API access ID |

| lmaccess.key | LogicMonitor logs ingestion API access key |

| lmlogs.winevent.channels | You must specify the Windows Events channels within this property. This contains the list of log files that you want to send to LM Logs, comma separated and with no spaces. For example, you can use the following: |

Note: lmaccess.id and lmaccess.key are LogicMonitor API Tokens that must have permissions to send logs to LM Logs.
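
The "comma separated and with no spaces" format of lmlogs.winevent.channels can be checked before the property is applied. The following Python sketch is only an illustration: the helper names are hypothetical, and the channel names Application and System are assumed sample values, not a recommended list.

```python
from typing import List


def format_channels(channels: List[str]) -> str:
    """Join channel names with commas and no spaces around the separators."""
    cleaned = [name.strip() for name in channels if name.strip()]
    if not cleaned:
        raise ValueError("at least one channel name is required")
    return ",".join(cleaned)


def is_valid_channels_value(value: str) -> bool:
    """Return True when the value has no empty entries and no spaces next to a comma."""
    parts = value.split(",")
    return all(part and part == part.strip() for part in parts)


# Assumed sample channel names, for illustration only.
print(format_channels(["Application", " System "]))    # Application,System
print(is_valid_channels_value("Application, System"))  # False
```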

Requirements for Ingesting Windows Event Logs

To ingest Windows Event Logs, you need the following:

- A LogicMonitor LMV1 API token, which is a key-based authentication that allows you to authenticate API calls to the LogicMonitor platform. It uses a key pair that consists of the Access ID and Access Key. If you have not created a LogicMonitor API token, see Adding an API Token for details.

- Windows servers as a managed resource. Your Windows servers must exist in LM as a managed resource and exist in the resource tree. This allows for easy ingestion since LogicMonitor will already have the necessary WMI credentials to pull the Windows Event logs.

- The Windows_Events_LMLogs DataSource installed. This LogicModule is available in your LogicMonitor portal. Navigate to Modules and search for the Windows_Events_LMLogs DataSource. For more information about installing the module, see Module Installation.

- Designated log file names for logs sent to LogicMonitor.

- The following API properties identified:

- lmaccess.id

- lmaccess.key

- lmlogs.winevent.channels.

For more information, see the Required Properties to Activate a DataSource configuration.
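
The DataSource uses the Access ID and Access Key for you, so no signing code is needed for this setup. For readers who want to see how an LMV1 key pair is typically turned into a request header, the following Python sketch assumes the LMv1 signing scheme as documented for the LogicMonitor REST API (HMAC-SHA256 over verb, epoch in milliseconds, body, and resource path). The function name, the placeholder credentials, and the resource path /log/ingest are assumptions made for illustration.

```python
import base64
import hashlib
import hmac
import time
from typing import Optional


def lmv1_auth_header(access_id: str, access_key: str, http_verb: str,
                     resource_path: str, body: str = "",
                     epoch_ms: Optional[int] = None) -> str:
    """Build an LMv1 Authorization header value from an Access ID and Access Key."""
    epoch = str(epoch_ms if epoch_ms is not None else int(time.time() * 1000))
    # Signed string: verb + epoch (ms) + request body + resource path.
    message = http_verb + epoch + body + resource_path
    digest_hex = hmac.new(access_key.encode("utf-8"), message.encode("utf-8"),
                          hashlib.sha256).hexdigest()
    signature = base64.b64encode(digest_hex.encode("utf-8")).decode("ascii")
    return f"LMv1 {access_id}:{signature}:{epoch}"


# Placeholder credentials and an assumed resource path, for illustration only.
header = lmv1_auth_header("EXAMPLE_ID", "EXAMPLE_KEY", "POST", "/log/ingest",
                          body='[{"message": "test"}]', epoch_ms=1700000000000)
print(header.split(":")[0])  # LMv1 EXAMPLE_ID
```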

Note: Some event logs may not be automatically recognized by LogicMonitor. If this happens, you must create them in the Windows Registry. For more information, see Eventlog Key from Windows.

Configuring the Windows Events DataSource to Ingest Windows Event Logs

Recommendation: When configuring the DataSource, exclude the security audit success log level. This log level creates a high volume of logs and generally does not add significant value for troubleshooting purposes.

- Use the existing Windows_Events_LMLogs DataSource, or create the Windows Event DataSource.

- In LogicMonitor, select Resource Tree. Navigate to the Windows resource you want to ingest logs from.

- Select Manage Properties and add the properties in Required Properties to Activate a DataSource configuration.

After the properties are applied for the DataSource, the Windows Events for each of the specified Channels are pushed to LM Logs. Navigate to Resources to see the Channels listed as discovered instances for Windows_Events_LMLogs.

When viewing the graphs for the instances, the LM Logs API response codes only return data on the instance corresponding to the first channel listed in the device property. This ensures that response codes trigger a single alert, rather than one for each DataSource instance. This is because the DataSource makes one API request for all instances together instead of individually.

The DataSource is configured to trigger a Warning alert if the Response Code is greater than 207.

Fluentd is an open-source data collector that provides a unifying layer between different types of log inputs and outputs. Fluentd can collect logs from multiple sources and structure the data in JSON format. This allows for unified log data processing, including collecting, filtering, buffering, and outputting logs across multiple sources and destinations.

If you are already using Fluentd to collect application and system logs, you can forward the logs to LogicMonitor using the LM Logs Fluentd plugin. This provides a LogicMonitor-developed gem that contains the specific instructions for sending logs to LogicMonitor.

Requirements

- A LogicMonitor account name.

- A LogicMonitor API token to authenticate all requests to the log ingestion API.

Installing the Plugin

Add the plugin to your Fluentd instance using one of the following options:

- With gem – if you have td-agent/fluentd installed along with native Ruby:

gem install fluent-plugin-lm-logs

- For native td-agent/fluentd plugin handling:

td-agent-gem install fluent-plugin-lm-logs

- Alternatively, you can add out_lm.rb to your Fluentd plugins directory.

Configuring the Plugin

In this step you specify which logs should be forwarded to LogicMonitor.

Create a custom fluent.conf file, or edit the existing one, and add the following to the Fluentd configuration. Properties are explained in the following.

# Match events tagged with "lm.**" and

# send them to LogicMonitor

<match lm.**>

@type lm

resource_mapping {"<event_key>": "<lm_property>"}

company_name <lm_company_name>

access_id <lm_access_id>

access_key <lm_access_key>

<buffer>

@type memory

flush_interval 1s

chunk_limit_size 8m

</buffer>

debug false

compression gzip

</match>

Configuration Properties

| Property | Description |

| company_name | Your LogicMonitor company or account name in the target URL: https://<account>.logicmonitor.com |

| resource_mapping | The mapping that defines the source of the log event to the LogicMonitor resource. In this case, the <event_key> in the incoming event is mapped to the value of <lm_property>. |

| access_id | The LogicMonitor API token access ID. It is recommended to create an API-only user. See API Tokens. |

| access_key | The LogicMonitor API token access key. See API Tokens. |

| flush_interval | Defines the time in seconds to wait before sending batches of logs to LogicMonitor. Default is 60s. |

| chunk_limit_size | Defines the size limit in MB for a collected logs chunk before sending the batch to LogicMonitor. Default is 8MB. |

| flush_thread_count | Defines the number of parallel batches of logs to send to LogicMonitor. Default is 1. Using multiple threads can hide the I/O and network latency, but does not improve the processing performance. |

| debug | When true, logs more information to the Fluentd console. |

| force_encoding | Specify the charset when logs contain invalid UTF-8 characters. |

| include_metadata | When true, appends additional metadata to the log. Default is false. |

| compression | Enable compression for incoming events. Currently supports gzip encoding. |

Request Example

Example of request sent:

curl http://localhost:8888/lm.test -X POST -d 'json={"message":"hello LogicMonitor from fluentd", "event_key":"lm_property_value"}'

Event returned:

{

  "message": "hello LogicMonitor from fluentd"

}

Mapping Resources

It is important that the sources generating the log data are mapped using the right format, so that logs are parsed correctly when sent to LogicMonitor Logs.

When defining the source mapping for the Fluentd event, the <event_key> in the incoming event is mapped to the LogicMonitor resource, which is the value of <lm_property>.

For example, you can map a “hostname” field in the log event to the LogicMonitor property “system.hostname” using:

resource_mapping {"hostname": "system.hostname"}

If the LogicMonitor resource mapping is known, the event_key property can be overridden by specifying _lm.resourceId in each record.
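
The mapping rules above can be illustrated with a short Python sketch. It is not the plugin's implementation: the function names and sample records are hypothetical, and the dotted-key lookup is an assumption based on mappings such as "kubernetes.pod_name". It only shows the described behavior, where each <event_key> found in the record is translated to its <lm_property>, and an explicit _lm.resourceId in the record takes precedence.

```python
from typing import Any, Dict, Optional


def get_nested(record: Dict[str, Any], dotted_key: str) -> Optional[Any]:
    """Look up a key such as "kubernetes.pod_name" literally, then as a nested path."""
    if dotted_key in record:
        return record[dotted_key]
    current: Any = record
    for part in dotted_key.split("."):
        if not isinstance(current, dict) or part not in current:
            return None
        current = current[part]
    return current


def resolve_resource(record: Dict[str, Any], resource_mapping: Dict[str, str]) -> Dict[str, Any]:
    """Return the LogicMonitor properties used to identify the resource for a record."""
    # An explicit _lm.resourceId in the record overrides resource_mapping.
    if "_lm.resourceId" in record:
        return dict(record["_lm.resourceId"])
    resolved: Dict[str, Any] = {}
    for event_key, lm_property in resource_mapping.items():
        value = get_nested(record, event_key)
        if value is not None:
            resolved[lm_property] = value
    return resolved


# Hypothetical records, for illustration only.
print(resolve_resource({"message": "hello", "hostname": "web-01"},
                       {"hostname": "system.hostname"}))
# {'system.hostname': 'web-01'}
print(resolve_resource({"message": "hi", "kubernetes": {"pod_name": "api-7d9"}},
                       {"kubernetes.pod_name": "auto.name"}))
# {'auto.name': 'api-7d9'}
```

A record that produces an empty result here corresponds to a log event that cannot be mapped to a resource.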

Configuration Examples

The following are examples of resource mapping.

Mapping with _lm.resourceId

In this example, all incoming records that match lm.** will go through the filter. The specified _lm.resourceId mapping is added before it is sent to LogicMonitor.

<filter lm.**>

@type record_transformer

<record>

_lm.resourceId { "system.aws.arn": "arn:aws:ec2:us-west-1:xxx:instance/i-xxx"}

tag ${tag}

</record>

</filter>

Mapping Kubernetes Logs

For Kubernetes logs in Fluentd, the resource mapping can be defined with this statement:

resource_mapping {"kubernetes.pod_name": "auto.name"}

Mapping a Log File Resource

If you want to only send logs with a specific tag to LogicMonitor, change the Regex pattern from ** to the specific tag. In this example the tag is “lm.service”.

#Tail one or more log files

<source>

@type tail

<parse>

@type none

</parse>

path /path/to/file

tag lm.service

</source>

# send all logs to Logicmonitor

<match **>

@type lm

resource_mapping {"Computer": "system.hostname"}

company_name LM_COMPANY_NAME

access_id LM_ACCESS_ID

access_key LM_ACCESS_KEY

<buffer>

@type memory

flush_interval 1s

chunk_limit_size 8MB

</buffer>

debug false

</match>

Parsing a Log File

In some cases you might be tailing a file for logs. Here it is important to parse the log lines so that fields are correctly populated with timestamp, host, log message and so on. The following example shows how to configure the source for this.

#Tail one or more log files

<source>

@type tail

<parse>

@type none # this will send log-lines as it is without parsing

</parse>

path /path/to/file

tag lm.service

</source>

There are many parsers available for Fluentd. You can install a parser plugin using gem install fluent-plugin-lm-logs. For more information, see the Fluentd documentation.

Transforming a Log Record

Logs that are read by source might not have all the metadata needed. Through a filter plugin you can modify the logs before writing them to LogicMonitor. Add the following block to the configuration file.

# records are filtered against a tag

<filter lm.filter>

@type record_transformer

<record>

system.hostname "#{Socket.gethostname}"

service "lm.demo.service"

</record>

</filter>

You can add more advanced filtering and write a Ruby code block with record_transformer. For better performance, you can use record_modifier. For more information, see the Fluentd documentation.

More Examples

Fluentd provides a unified logging layer which can be used for collecting many types of logs that can be forwarded to LogicMonitor for analysis. The following lists additional sources of configuration samples.

- For sample configurations for popular log sources, see Logs Fluentd Examples (GitHub).

- For more available Fluentd plugins, see the Fluentd documentation.

Tuning Performance

In some cases you might need to fine-tune the configuration to optimize the Fluentd performance and resource usage. For example, if the log input speed is faster than the log forwarding, the batches will accumulate. You can prevent this by adjusting the buffer configuration.

Buffer plugins are used to store the incoming stream temporarily before transmitting. For more information, see the Fluentd documentation.

The following types of buffer plugins are available:

- memory (buf_memory). Uses memory to store buffer chunks.

- file (buf_file). Uses files to store buffer chunks on disk.

In the following configuration example, Fluentd creates chunks of logs of up to 8MB (chunk_limit_size), and sends them to LogicMonitor every 1 second (flush_interval). Note that 8MB is only the upper limit: chunks are sent every 1 second even if they are smaller than 8MB.

<match lm.**>

@type lm

company_name LM_COMPANY_NAME

access_id LM_ACCESS_ID

access_key LM_ACCESS_KEY

<buffer>

@type memory

flush_interval 1s

chunk_limit_size 8MB

</buffer>

debug false

</match>

Adjusting Rate of Incoming Logs

If the log input speed is faster than the log forwarding, the batches will accumulate. If you use the memory-based buffer, the log chunks are kept in the memory, and memory usage will increase. To prevent this, you can have multiple parallel threads for the flushing.
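
To get a feel for how quickly a memory buffer can grow, the following Python sketch uses a deliberately simplified model: constant input and forwarding rates, and ideal scaling where every flush thread forwards at the same rate. The function name and all rates are hypothetical. Real throughput depends on network latency and on the ingestion endpoint, so treat the result as a rough upper bound on the benefit of extra threads.

```python
def buffered_bytes_after(seconds: int, input_rate: float,
                         flush_rate_per_thread: float, flush_threads: int = 1) -> float:
    """Estimate bytes left in the buffer after `seconds`, assuming constant rates."""
    net_rate = input_rate - flush_rate_per_thread * flush_threads
    return max(0.0, float(net_rate * seconds))


MB = 1024 * 1024
# Hypothetical rates: 10 MB/s of incoming logs, 2 MB/s forwarded per flush thread.
print(buffered_bytes_after(60, 10 * MB, 2 * MB) / MB)                   # 480.0
print(buffered_bytes_after(60, 10 * MB, 2 * MB, flush_threads=8) / MB)  # 0.0
```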

Update the buffer configuration as shown in the following example. Adding flush_thread_count 8 can increase the output rate by up to 8 times.

<match lm.**>

@type lm

company_name LM_COMPANY_NAME

access_id LM_ACCESS_ID

access_key LM_ACCESS_KEY

<buffer>

@type memory

flush_interval 1s

chunk_limit_size 8MB

flush_thread_count 8

</buffer>

debug false

</match>

Using File-Based Buffering

If you have an upper limit for parallel thread processing, and have a spike in the incoming log rate, you can use file-based buffering instead. To use this, change @type memory to @type file in the buffer configuration block. Note that this may result in increased I/O operations.

Troubleshooting

Enable debug logging by setting the debug property to “true” in fluent.conf to see additional information in the Fluentd console. The following describes some common troubleshooting scenarios when using Fluentd. For more information on logs troubleshooting, see Troubleshooting.

Investigating td-agent Logs

When troubleshooting, look for the log file td-agent.log located in the parent directory of td-agent, for example c:\opt\td-agent.

The following is an example of a td-agent.conf file.

<source>

@type tail

path FILE_PATH

pos_file C:\opt\td-agent\teamaccess.pos

tag collector.teamaccess

<parse>

@type none

</parse>

</source>

<filter collector.**>

@type record_transformer

<record>

#computer_name ${hostname}

computer_name "<removed>"

</record>

</filter>

<match collector.**>

@type lm

company_name <COMPANY_NAME>

access_id <ACCESS_ID>

access_key <ACCESS_KEY>

resource_mapping {"computer_name": "system.sysname"}

<buffer>

@type memory

flush_interval 1s

chunk_limit_size 8MB

</buffer>

debug false

</match>

For more information, see this Fluentd documentation.

Delayed Ingestion for Multi-Line Events

For multi-line events, log ingestion might be delayed until the next log entry is created. This delay occurs because Fluentd will only parse the last line when a line break is appended at the end of the line. To fix this, add or increase the configuration property multiline_flush_interval (in seconds) in fluent.conf.

Resource Mapping Failures

In these cases Fluentd appears to be working but logs do not appear in LogicMonitor. This is most likely caused by incorrect or missing resource mappings.

By default, fluent-plugin-lm looks for “host” and “hostname” in a log record coming from Fluentd. The plugin tries to map the record to a device with the same value for the “system.hostname” property in LogicMonitor. The resource to be mapped to must be uniquely identifiable, with “system.hostname” having the same value as “host” / “hostname” in the log.

The following are examples of host/hostname mappings.

Example 1

Configuration:

resource_mapping {"hostname": "system.sysname"}

Result:

if log : { "message" : "message", "host": "55.10.10.1", "timestamp" : ..........}

The log will be mapped against a resource in LM which is uniquely identifiable by property system.sysname = 55.10.10.1

Example 2

Mapping with multiple properties where devices are uniquely identifiable.

Configuration:

resource_mapping {"hostname": "system.sysname", "key_in_log" : "Property_key_in_lm"}

Result:

if log : { "message" : "message", "host": "55.10.10.1", "key_in_log": "value", "timestamp" : ..........}

The log will be mapped against a device in LogicMonitor which is uniquely identifiable by property system.sysname = 55.10.10.1 and Property_key_in_lm = value

Example 3

Hard coded resource mapping of all logs to one resource. The resource has to be uniquely identifiable by the properties used.

Configuration:

#Tail one or more log files

<source>

.....

</source>

#force resource mapping to single device with record transformer

<filter lm.**>

@type record_transformer

<record>

_lm.resourceId {"system.hostname" : "11.2.3.4", "system.region" : "north-east-1", "system.devicetype" : "8"}

tag lm.filter

</record>

</filter>

# send all logs to Logicmonitor

<match lm.**>

@type lm

company_name LM_COMPANY_NAME

access_id LM_ACCESS_ID

access_key LM_ACCESS_KEY

<buffer>

@type memory

flush_interval 1s

chunk_limit_size 8MB

</buffer>

debug false

</match>

Result:

Logs with tag lm.** will be mapped to a resource uniquely identified by the following properties:

system.hostname = 11.2.3.4

system.region = north-east-1

system.devicetype = 8

Windows and Wildcards in File Paths

Due to internal limitations, backslash (\) in combination with wildcard (*) does not work for file paths in Windows. To avoid errors caused by this limitation, use a forward slash (/) instead when adding file paths in the td-agent.conf file.

Example: Use …/logs/filename.*.log instead of …\logs\filename.*.log
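
If file paths are generated by a script before they are written to td-agent.conf, the conversion can be automated. The following Python sketch shows the idea. The function name and the sample path are hypothetical.

```python
def to_fluentd_path(windows_path: str) -> str:
    """Replace backslashes with forward slashes so wildcards work in tail paths."""
    return windows_path.replace("\\", "/")


# Hypothetical log location, used only for illustration.
print(to_fluentd_path(r"C:\opt\app\logs\filename.*.log"))  # C:/opt/app/logs/filename.*.log
```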

For more information, see this Fluentd documentation.

The following describes how to send logs from Google Cloud Platform (GCP) to LM Logs for analysis.

Requirements

LogicMonitor API tokens to authenticate all requests to the log ingestion API.

Supported GCP Logs and Resources

LM Logs supports the following resources and log types:

- GCP audit logs

- GCP Cloud Composer logs

- GCP Cloud Function logs

- GCP Cloud Run logs

- GCP CloudSQL logs

- Virtual Machine (VM) instance logs

Installation Instructions

1. On your Google Cloud account, select Activate Cloud Shell. This opens the Cloud Shell Terminal below the workspace.

2. In the Terminal, run the following command to select the project:

gcloud config set project [PROJECT_ID]

3. Run the following command to install the integration:

source <(curl -s https://raw.githubusercontent.com/logicmonitor/lm-logs-gcp/master/script/gcp.sh) && deploy_lm-logs

Installing the integration creates these resources:

- A PubSub topic named export-logs-to-logicmonitor and a pull subscription.

- A Virtual Machine (VM) named lm-logs-forwarder.

Note: You will be prompted to confirm the region where the VM is deployed. This should already be configured within your project.

Configuring the Log Forwarder

1. After the installation script completes, navigate to the Compute Engine > VM Instances and select lm-logs-forwarder.

2. Under Remote access, select SSH.

3. SSH into the VM (lm-logs-forwarder) and run the following command, filling in the values:

export GCP_PROJECT_ID="GCP_PROJECT_ID"

export LM_COMPANY_NAME="LM_COMPANY_NAME"

export LM_COMPANY_DOMAIN="${LM_COMPANY_DOMAIN}"

export LM_ACCESS_ID="LM_ACCESS_ID"

export LM_ACCESS_KEY="LM_ACCESS_KEY"

source <(curl -s https://raw.githubusercontent.com/logicmonitor/lm-logs-gcp/master/script/vm.sh)

Note: If LM_COMPANY_DOMAIN is not provided or is set to an empty string, it defaults to “logicmonitor.com”. The supported domains for this variable are as follows:

- lmgov.us

- qa-lmgov.us

- logicmonitor.com
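
The defaulting rule in the note can be expressed as a small check to run before exporting the variables. This Python sketch is an illustration only: the function names are hypothetical, rejecting unsupported values is an assumption about sensible handling rather than documented behavior of vm.sh, and the URL pattern for domains other than logicmonitor.com is assumed to follow https://<account>.<domain>.

```python
from typing import Optional

SUPPORTED_DOMAINS = ("lmgov.us", "qa-lmgov.us", "logicmonitor.com")
DEFAULT_DOMAIN = "logicmonitor.com"


def resolve_company_domain(value: Optional[str]) -> str:
    """Return the default when the value is unset or empty, else a supported domain."""
    if value is None or value.strip() == "":
        return DEFAULT_DOMAIN
    domain = value.strip()
    if domain not in SUPPORTED_DOMAINS:
        # Assumption: treat anything outside the documented list as a configuration error.
        raise ValueError(f"unsupported LM_COMPANY_DOMAIN: {domain!r}")
    return domain


def portal_url(company_name: str, domain: Optional[str] = None) -> str:
    """Build the portal base URL from the account name and the resolved domain."""
    return f"https://{company_name}.{resolve_company_domain(domain)}"


# "acme" is a placeholder account name.
print(portal_url("acme"))              # https://acme.logicmonitor.com
print(portal_url("acme", "lmgov.us"))  # https://acme.lmgov.us
```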

Exporting Logs from Logging to PubSub

You need to create a sink from Logging to the PubSub topic export-logs-to-logicmonitor (created at installation).

1. In the Logging page, filter the logs that you want to export.

Recommendation: Use the filters to remove logs that contain sensitive information so that they are not sent to LogicMonitor.

2. Select Actions > Create sink and under Sink details, provide a name.

3. Under Sink destination, choose Cloud Pub/Sub as the destination and select export-logs-to-logicmonitor. The pub/sub can be located in a different project.

4. Select Create sink. If there are no issues, you should see the logs stream into the LM Logs page.

Removing the Integration

Run the following command to delete the integration and all its resources:

source <(curl -s https://raw.githubusercontent.com/logicmonitor/lm-logs-gcp/master/script/gcp.sh) && delete_lm-logs

Default Metadata for Logs

The following metadata is added by default to logs along with the raw message string.

| Metadata key | Description |

| severity | Severity level for the event. Must be one of “Informational”, “Warning”, “Error”, or “Critical”. |

| logName | The resource name of the log to which this event belongs. |

| category | Log category for the event. Typical log categories are “Audit”, “Operational”, “Execution”, and “Request”. |

| _type | Service, application, or device/virtual machine responsible for creating the event. |

| labels | Labels for the event. |

| resource.labels | Labels associated with the resource to which the event belongs. |

| httpRequest | The HTTP request associated with the log entry, if any. |

Additional Metadata

If you need additional metadata, use the following configuration for plugin fluent-plugin-lm-logs-gcp.

Add the following block in fluentd/td-agent config file.

<filter pubsub.publish>

@type gcplm

metadata_keys severity, logName, labels, resource.type, resource.labels, httpRequest, trace, spanId, custom_key1, custom_key2

use_default_severity true

</filter>

To add static metadata, use the record transformer. Add the following block to fluentd.conf for the static metadata.

<filter pubsub.publish>

@type record_transformer

<record>

some_key some_value

tag ${tag} # can add dynamic data as well

</record>

</filter>

For more information about the configurations for the plugin, see lm-logs-fluentd-gcp-filter on GitHub.

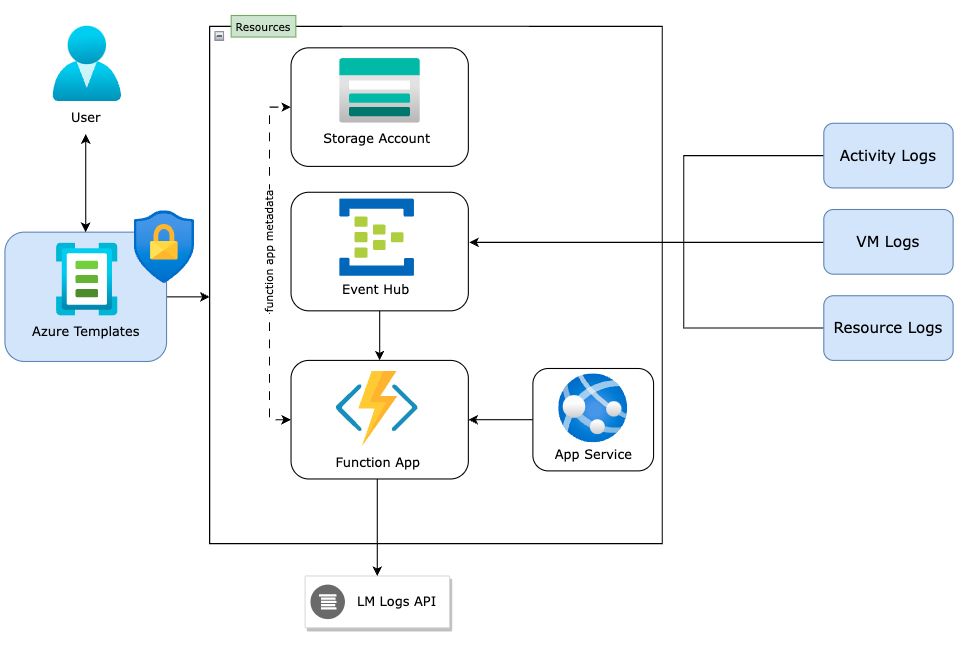

The Microsoft Azure integration for LM Logs is implemented as an Azure Function that consumes logs from an Event Hub and sends the logs to the LogicMonitor logs ingestion API. The following describes options for setting up the forwarding of Azure logs.

Requirements

- An Azure Cloud Account created in your LogicMonitor portal.

- LogicMonitor API tokens to authenticate all requests to the log ingestion API.

- The Azure CLI tools installed on the machines that will forward logs.

- A “User Administrator” role in Azure to create the managed identity which will access the Azure resources logs.

- Azure devices can only send logs to the Event Hubs within the same region. Each Azure region requires a separate Azure Function deployment.

Azure Templates

You can use the Azure Templates provided by LogicMonitor to do the following:

- Configure and deploy the Azure resources required to forward activity logs.

- Create a managed identity to access the Azure resources logs.

- Forward the logs to the LM Logs API.

Deploying Resources

The Azure Templates deploy a resource group named lm-logs-{LM_Company_name}-{resource_group_region}-group. The group has the following resources:

- Event Hub

- Azure Function

- Storage Account

- App Service plan

Once the Azure Function and Event Hub are deployed, the Azure Function listens for logs from the Event Hub.

Select the Deploy to Azure button below to open the Azure Template and run the deployment.

When deploying, you need to provide the following details in the template:

| Parameter | Description |

| Region | (Required) The location to store the deployment metadata. Predefined in Azure but you can change the value. For a list of Azure regions by their display names, see Azure geographies. |

| resource_group_region | (Required) Enter the region where you want to create the resource group and deploy resources like Event Hub, Function app, and so on. For a list of the Azure regions in plain text, run the following command from PowerShell with the Azure CLI tools installed: az account list-locations -o table |

| LM_Company_name | (Required) Your LogicMonitor company or account name in the target URL. This is only the <account> value, not the fully qualified domain name (FQDN). Example: https://<account>.logicmonitor.com |

| LM_Domain_Name | The domain of your LM portal. By default, it is set to "logicmonitor.com". The supported domains for this variable are lmgov.us, qa-lmgov.us, and logicmonitor.com. |

| LM_Access_Id | (Required) The LM API token access ID. You should use an API-only user for this integration. |

| LM_Access_Key | (Required) The LM API token access key. |

| Azure_Client_Id | (Required) The Application (client) ID used while creating the Azure Cloud Account in your LogicMonitor portal. Note: This ID should have been created when you connected the Azure Cloud Account. The ID can be found in the Azure Active Directory under App Registrations. |

| Enable Activity Logs | (Optional) Specify whether or not to send Activity Logs to the Event Hub created with the Azure Function. Can be “Yes” (default) or “No”. |

| Azure_Account_Name | (Optional) Use this field to establish mapping between the Azure account logs and the Cloud account resource. The Azure Account name can be retrieved from the system.displayname field in the Cloud Account Info tab. |

| LM_Bearer_Token | (Optional) LM API Bearer Token. Use either both access_id and access_key, or bearer_token. If all the parameters are provided, the LMv1 token (access_id and access_key) is used for authentication with LogicMonitor. |

| Include_Metadata_keys | Comma-separated keys to add as event metadata in an LM Logs event. Specify ‘.’ for nested JSON (for example, properties.functionName,properties.message). |

| LM Tenant Id | The LogicMonitor Tenant Identifier, which is sent as event metadata to LogicMonitor. |

| TLSVersionStorageAccount (TLS Version Storage Account) | Specify the TLS version for the storage account in the format x_x. For example, 1.2 is provided as 1_2. The default is 1_2. |

| TLSVersionFunctionApp (TLS Version Function App) | Specify the TLS version for the function app in the format X.X. The default is 1.3. |

If the deployment is successful and Enable Activity Logs is set to “Yes”, logs should appear in the LM Logs page. These logs will be mapped to the Azure Cloud Account created in the LogicMonitor portal. If logs are not being forwarded, see Enabling Debug Logging.

If Enable Activity Logs was set to “No”, you need to manually configure forwarding of logs to the Event Hub. LogicMonitor also provides templates for this. See Sending Azure Resource Logs in the following.

Sending Azure Resource Logs

Once the Azure Function and Event Hub are deployed, Azure Function listens for logs from the Event Hub. The next step is to configure your resources and resource groups to send their logs to the Event Hub. For most Azure resources, this can be done by updating their diagnostic settings.

The managed identity is only required if you forward resource logs using the template provided. Without the managed identity, you can still manually configure the diagnostic settings of Azure resources to forward their logs to the Event Hub.

Creating a Managed Identity

Select the Deploy to Azure button below to open the Azure Template and run the deployment. This creates the Managed Identity with the User Administrator role.

When deploying, you need to provide the following details in the template:

| Parameter | Description |

| resource_group_region | (Required) The region where you created the resource group and deployed resources like the Event Hub, Function app, and so on. For a list of the Azure regions in plain text, run the following command from PowerShell with the Azure CLI tools installed: az account list-locations -o table. Note: The resource group and the resources within it must be in the same region as that of the Event Hub created when you deployed the Azure Function. |

| LM_Company_name | (Required) Your LogicMonitor company or account name in the target URL. This is only the <account> value, not the fully qualified domain name (FQDN). Example: https://<account>.logicmonitor.com |

Updating Diagnostic Settings

Select the Deploy to Azure button below to open the Azure Template and run the deployment. This lets you configure resource level log forwarding to the Event Hub. This template updates the diagnostic settings of selected resources in the resource group.

When deploying, you need to provide the following details in the template:

| Parameter | Description |

| Resource Group | (Required) The resource group from where you want to forward logs to the Event Hub. For a list of Azure regions by their display names, see Azure geographies. |

| Subscription ID | (Required) The ID for the subscription which consists of all the resource groups. |

| LM_Company_name | (Required) Your LogicMonitor company or account name in the target URL. This is only the <account> value, not the fully qualified domain name (FQDN). Example: https://<account>.logicmonitor.com |

| Force Update Tag | (Optional) Changing this value between template deployments forces the deployment script to re-execute. |

| Deployment Location | (Required) Select the region where this deployment is configured. |

Note: While this deployment is running, you can see the deployment logs in the script that gets created in the resource group. Example: “lm-logs-{LM_Company_name}-{resource_group_region}-group_script”.
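
When scripting checks against a deployment, it can help to derive the expected names from the template parameters. The following Python sketch applies the naming patterns given above by plain substitution. The function names and the sample account and region are hypothetical, and any normalization that Azure or the template may apply to the values is not modeled.

```python
def resource_group_name(lm_company_name: str, resource_group_region: str) -> str:
    """Name of the resource group deployed by the Azure Templates."""
    return f"lm-logs-{lm_company_name}-{resource_group_region}-group"


def deployment_script_name(lm_company_name: str, resource_group_region: str) -> str:
    """Name of the deployment script created in the resource group."""
    return resource_group_name(lm_company_name, resource_group_region) + "_script"


# Hypothetical account name and region.
print(resource_group_name("acme", "westus2"))     # lm-logs-acme-westus2-group
print(deployment_script_name("acme", "westus2"))  # lm-logs-acme-westus2-group_script
```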

Sending Virtual Machine Logs

For virtual machines (VMs), the diagnostic settings will not be updated through the described template deployments. To forward system and application logs from VMs, you need to install and configure diagnostic extensions on the VMs. The following describes how to set up the log forwarding for Linux and Windows VMs.

Sending Linux VM Logs

Do the following to forward system and application logs from Linux VMs:

1. Install the diagnostic extension for Linux on the VM.

2. Install the Azure CLI.

3. Sign in to Azure using the Azure CLI: az login

4. Download the configuration script using the following command:

wget https://raw.githubusercontent.com/logicmonitor/lm-logs-azure/master/vm-config/configure-lad.sh

5. Run the configuration to create the storage account and configuration files needed by the diagnostic extension:

./configure-lad.sh <LM company name>

6. Update lad_public_settings.json to configure types of system logs and their levels (syslogEvents) and application logs (filelogs) to collect.

7. Run the following command to configure the extension:

az vm extension set --publisher Microsoft.Azure.Diagnostics --name LinuxDiagnostic --version 3.0 --resource-group <your VM's Resource Group name> --vm-name <your VM name> --protected-settings lad_protected_settings.json --settings lad_public_settings.json

Note: The exact command will be printed by the configure-lad.sh script.

Sending Windows VM Logs

Do the following to forward system and application logs from Windows VMs:

1. Install the diagnostic extension for Windows on the VM.

2. Install the Azure CLI using PowerShell:

Invoke-WebRequest -Uri https://aka.ms/installazurecliwindows -OutFile .\AzureCLI.msi; Start-Process msiexec.exe -Wait -ArgumentList '/I AzureCLI.msi /quiet'; rm .\AzureCLI.msi

3. Sign in to Azure using the Azure CLI: az login

4. Download the configuration script using the following command:

Invoke-WebRequest -Uri https://raw.githubusercontent.com/logicmonitor/lm-logs-azure/master/vm-config/configure-wad.ps1 -OutFile .\configure-wad.ps1

5. Run the configuration to create the storage account and configuration files needed by the diagnostic extension:

.\configure-wad.ps1 -lm_company_name <LM company name>

6. Update wad_public_settings.json to configure types of event logs (Application, System, Setup, Security, and so on) and their levels (Info, Warning, Critical) to collect.

7. Run the following command to configure the extension:

az vm extension set --publisher Microsoft.Azure.Diagnostics --name IaaSDiagnostics --version 1.18 --resource-group <your VM's Resource Group name> --vm-name <your VM name> --protected-settings wad_protected_settings.json --settings wad_public_settings.json

Note: The exact command will be printed by the configure-wad.ps1 script.

Metadata for Azure Logs

The following table lists metadata fields for the Azure Logs integration with LM Logs. The integration looks for this data in the log records and adds the data to the logs along with the raw message string.

| Property | Description | LM Mapping | Default |
| --- | --- | --- | --- |
| time | The timestamp (UTC) for the event. | timestamp | Yes |
| level | Severity level for the event. Must be one of “Informational”, “Warning”, “Error”, or “Critical”. | severity | Yes |
| operationName | Name of the operation that this event represents. | activity_type | Yes |
| resourceId | Resource ID for the resource that emitted the event. For tenant services, this is in the form /tenants/tenant-id/providers/provider-name. When deploying the Azure integration using a template, you can add this field as a metadata key parameter to look for in the log record. | azure_resource_id | No |
| category | Log category for the event. Typical log categories are “Audit”, “Operational”, “Execution”, and “Request”. | category | Yes |
| ResourceType | Indicates where the Azure logs come from and where the service is deployed. | ResourceType | Yes |
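The mapping in the table can be illustrated with a short sketch. This is not the integration's actual code. It only shows how the listed properties could be picked out of a log record and renamed to their LM Logs fields, with resourceId included only when requested as an extra metadata key. The function name, the extra_keys parameter, and any sample record are hypothetical.

```python
# Azure property -> (LM Logs field, collected by default), taken from the table.
FIELDS = {
    "time": ("timestamp", True),
    "level": ("severity", True),
    "operationName": ("activity_type", True),
    "resourceId": ("azure_resource_id", False),
    "category": ("category", True),
    "ResourceType": ("ResourceType", True),
}


def extract_metadata(record, extra_keys=()):
    """Return LM-mapped metadata for the properties present in a log record.

    Non-default properties are included only when named in extra_keys.
    """
    metadata = {}
    for prop, (lm_field, is_default) in FIELDS.items():
        if prop in record and (is_default or prop in extra_keys):
            metadata[lm_field] = record[prop]
    return metadata
```

Properties missing from a record are skipped rather than filled with placeholder values, so the output contains only fields that were actually found.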

Troubleshooting

Follow these steps to troubleshoot issues with your Azure logs integration.

1. Confirm that the install process provisioned all the required resources: an Event Hub, a resource group, a storage account, and an Azure Function.

2. Confirm that logs are being sent to the Event Hub:

- Navigate to your Event Hub in the Azure portal and check that the incoming messages count is greater than 0.

- You can also check this for specific agents or applications by looking in their Azure Logs folder. For example, if you are running a Windows VM with an IaaSDiagnostics extension, its logs are in the following Azure Logs directory (with version and wadid specified):

C:\WindowsAzure\Logs\Plugins\Microsoft.Azure.Diagnostics.IaaSDiagnostics\<VERSION>\<WADID>\Configuration

3. Confirm that the Azure Function is running and forwarding logs to LogicMonitor. For more information, see Enabling Debug Logging.

- If the Function App is running and receiving logs, but you are not seeing the logs in LogicMonitor, confirm that the LM_Access_Key or LM_Access_Id provided is correct.

- If the Function App is not being executed, but logs are sent to the Event Hub, try to run the Azure function locally and check if it receives any log messages:

- If the local function receives logs, stop it and run the function in the Azure portal. You can check its logs using the Azure CLI.

- If the local function does not receive logs, check its connection string and the shared access policy of the Event Hub.

4. Send a test event from the log-enabled VM using PowerShell. On the configured device, open a PowerShell prompt and run the following command:

eventcreate /Id 500 /D "test error event for windows" /T ERROR /L Application

After the command runs, you should see the event in the LM Logs page.
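If the Function App receives logs but nothing appears in LogicMonitor, it can help to test the access ID and key outside Azure. The following is a minimal sketch that builds an LMv1 authorization header. It assumes the LM_Access_Id and LM_Access_Key values are LMv1 API tokens and that the ingestion resource path is /log/ingest; confirm both against the LM Logs REST API documentation. The function name is hypothetical, and the code only constructs the header. It does not send a request.

```python
import base64
import hashlib
import hmac
import time


def lmv1_auth_header(access_id, access_key, body,
                     resource_path="/log/ingest", verb="POST", epoch_ms=None):
    """Build an LMv1 Authorization header value for a request body.

    The default resource path is an assumption; verify it for your portal.
    """
    if epoch_ms is None:
        epoch_ms = int(time.time() * 1000)
    # The signed string is the verb, the epoch in milliseconds, the body, and the path.
    request_vars = f"{verb}{epoch_ms}{body}{resource_path}"
    digest = hmac.new(access_key.encode(), request_vars.encode(), hashlib.sha256).hexdigest()
    # The signature is the base64 encoding of the hex digest.
    signature = base64.b64encode(digest.encode()).decode()
    return f"LMv1 {access_id}:{signature}:{epoch_ms}"
```

You can send the resulting value in the Authorization header of a test request with any HTTP client. An authentication error in the response points to an incorrect ID or key rather than a problem in the Azure pipeline.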

Updating Template Parameters

You may need to update the template after deployment, for example to change credentials or parameters. You can do this in the Function App configuration by navigating to Function app lm-logs-{LM_Company_name}-{resource_group_region} → configuration → edit.
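As an alternative to editing in the portal, the same change can be scripted. The sketch below derives the Function App name from the naming pattern above and assembles an az functionapp config appsettings set command. It assumes the template stores its parameters as application settings; check the setting names in the portal first. The helper names and any values passed in are hypothetical.

```python
def function_app_name(company, region):
    """Name of the Function App, following the lm-logs-{company}-{region} pattern."""
    return f"lm-logs-{company}-{region}"


def appsettings_command(company, region, resource_group, settings):
    """Return the argument list for updating Function App application settings.

    settings maps setting names to new values (assumed to be app settings).
    """
    pairs = [f"{key}={value}" for key, value in settings.items()]
    return [
        "az", "functionapp", "config", "appsettings", "set",
        "--name", function_app_name(company, region),
        "--resource-group", resource_group,
        "--settings", *pairs,
    ]
```

The returned list can be passed to subprocess.run(command, check=True) on a machine where the Azure CLI is installed and signed in.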

Enabling Debug Logging

You can enable Application Insights in the Function App to check whether it is receiving logs. For more information, see Enable streaming execution logs.

You can configure the application logging type and level using the Azure CLI webapp log config command. Example:

az webapp log config --resource-group <Azure Function's Resource Group name> --name <Azure Function name> --application-logging true --level verbose --detailed-error-messages true

After configuring application logging, you can see the logs using Azure CLI webapp log tail. Example:

az webapp log tail --resource-group <Azure Function's Resource Group name> --name <Azure Function name>

Removing Azure Resources

The Azure templates you ran to set up log ingestion create several resources, including the Event Hub, which sends log data to LM Logs. If needed, you can remove the LM Logs integration to stop the flow of data and the associated costs.

Note: Before removing a resource group, ensure you have not added other non-LM Logs items into the group.

Do the following:

1. In your Azure portal, navigate to the monitored VM > Activity log > Diagnostic settings > Edit setting (for the Logs Event Hub) and select Delete.

2. Delete the Event Hub that has the name and region name you created during setup. This stops the flow of logs from Azure to LM Logs.

3. You can remove all other resources created by the template deployment, such as the Function App, Managed Identity, Application Insights, and Storage account. The names of these follow the Event Hub naming convention from the template. You can remove each item individually, or if they are in a resource group, you can remove the entire group.

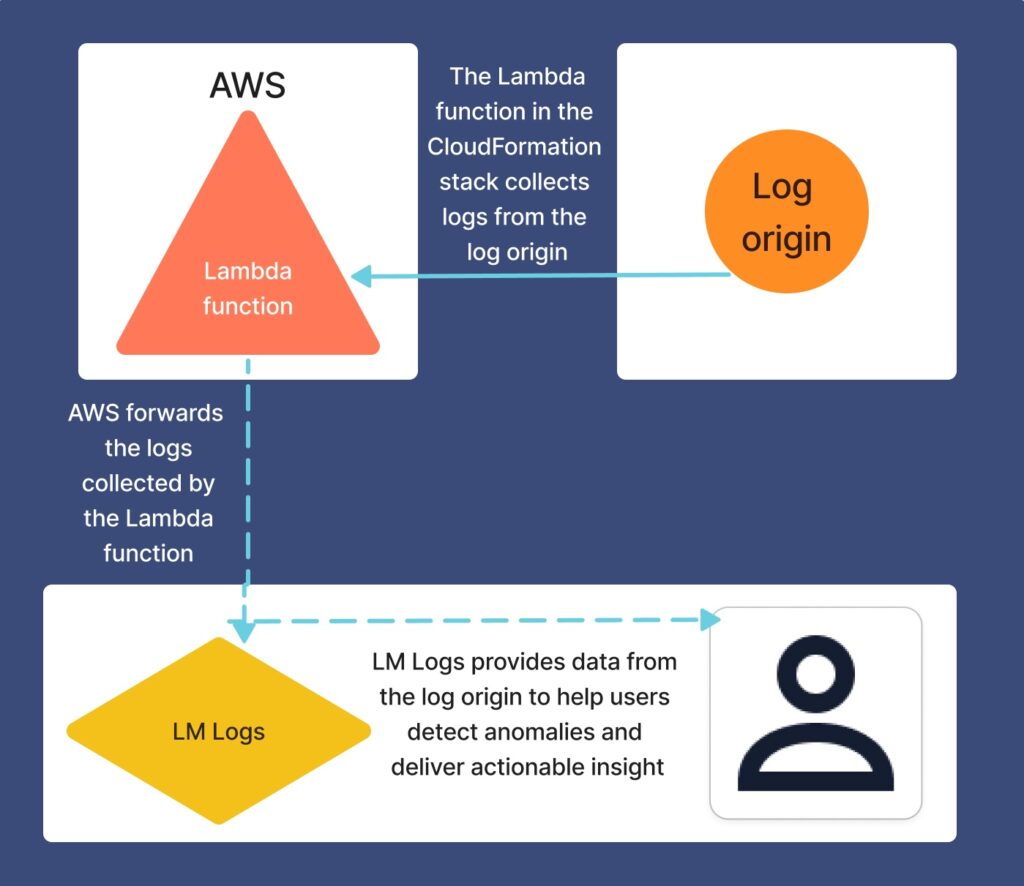

Using AWS CloudWatch to monitor your Lambda functions is a valuable method to obtain useful reporting and alerts. However, relying solely on AWS CloudWatch to monitor your data can leave you without in-depth insights and contextual information.

LogicMonitor integrates with your AWS services to provide detailed analysis of the performance of your Lambda functions. By utilizing a customizable template to configure and deploy Lambda functions that forward log data, LogicMonitor ingests the logs and then uses the data from them to detect anomalies and deliver actionable insights. For more information, see Lambda Function Deployment for AWS Logs Ingestion.

Review the following to see how log ingestion works when integrated with AWS using CloudFormation stacks:

Supported AWS Services for Log Ingestion

See the AWS documentation for the following supported AWS services:

- AWS EC2 Logs

- AWS ELB Access Logs

- AWS RDS Logs

- AWS Lambda Logs

- AWS EC2 Flow Logs

- AWS NAT Gateway Flow Logs

- AWS CloudTrail Logs

- AWS CloudFront Logs

- AWS Kinesis Data Streams Logs

- AWS Kinesis Data Firehose Logs

- AWS ECS Logs

- AWS EKS Logs

- AWS Bedrock Logs

- AWS EventBridge CloudWatch Events Logs

For information about how to configure each AWS service to send logs to LM Logs, see AWS Service Configuration for Log Ingestion.