IT Humans in the Loop:

The LogicMonitor Blog

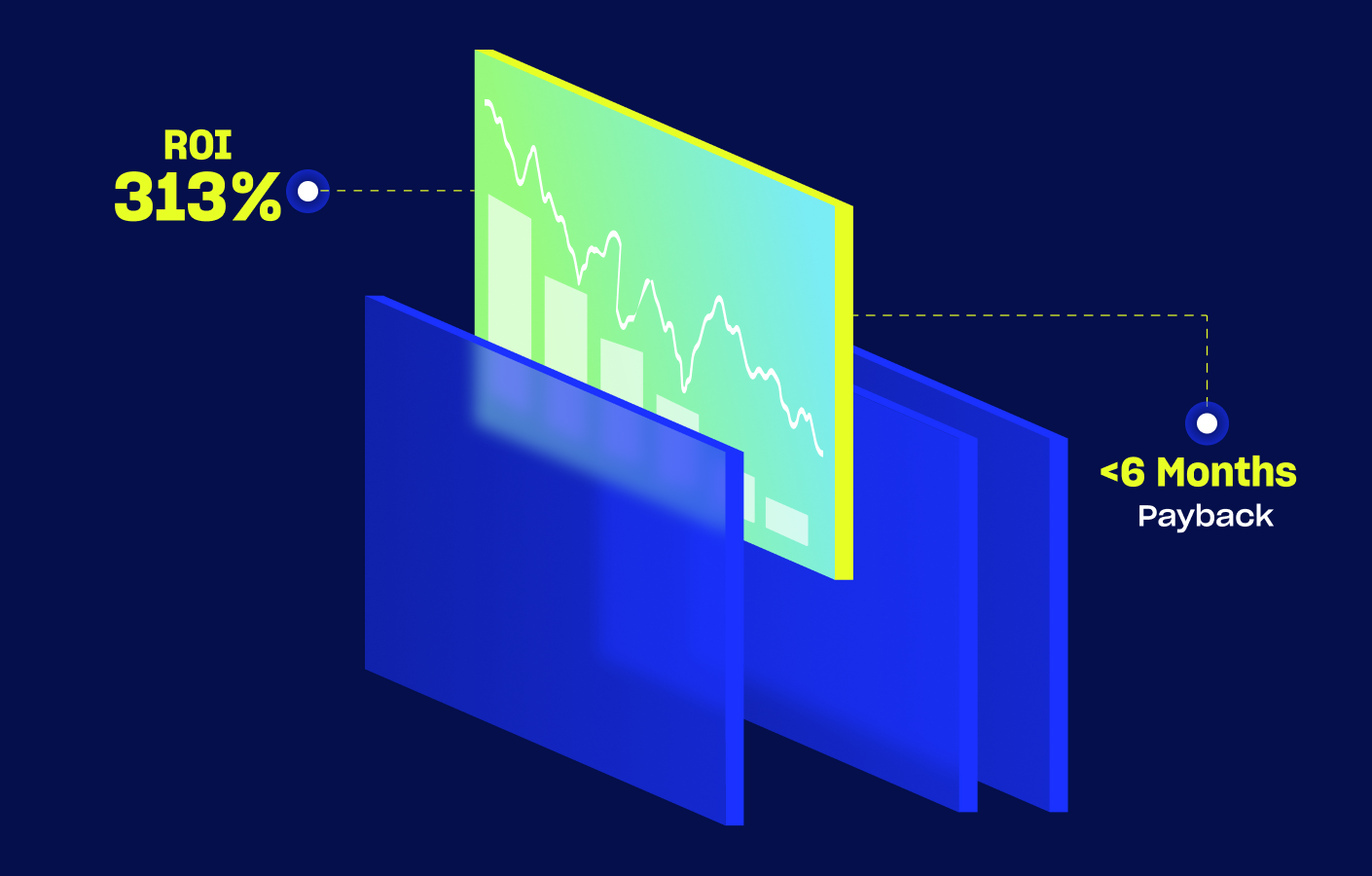

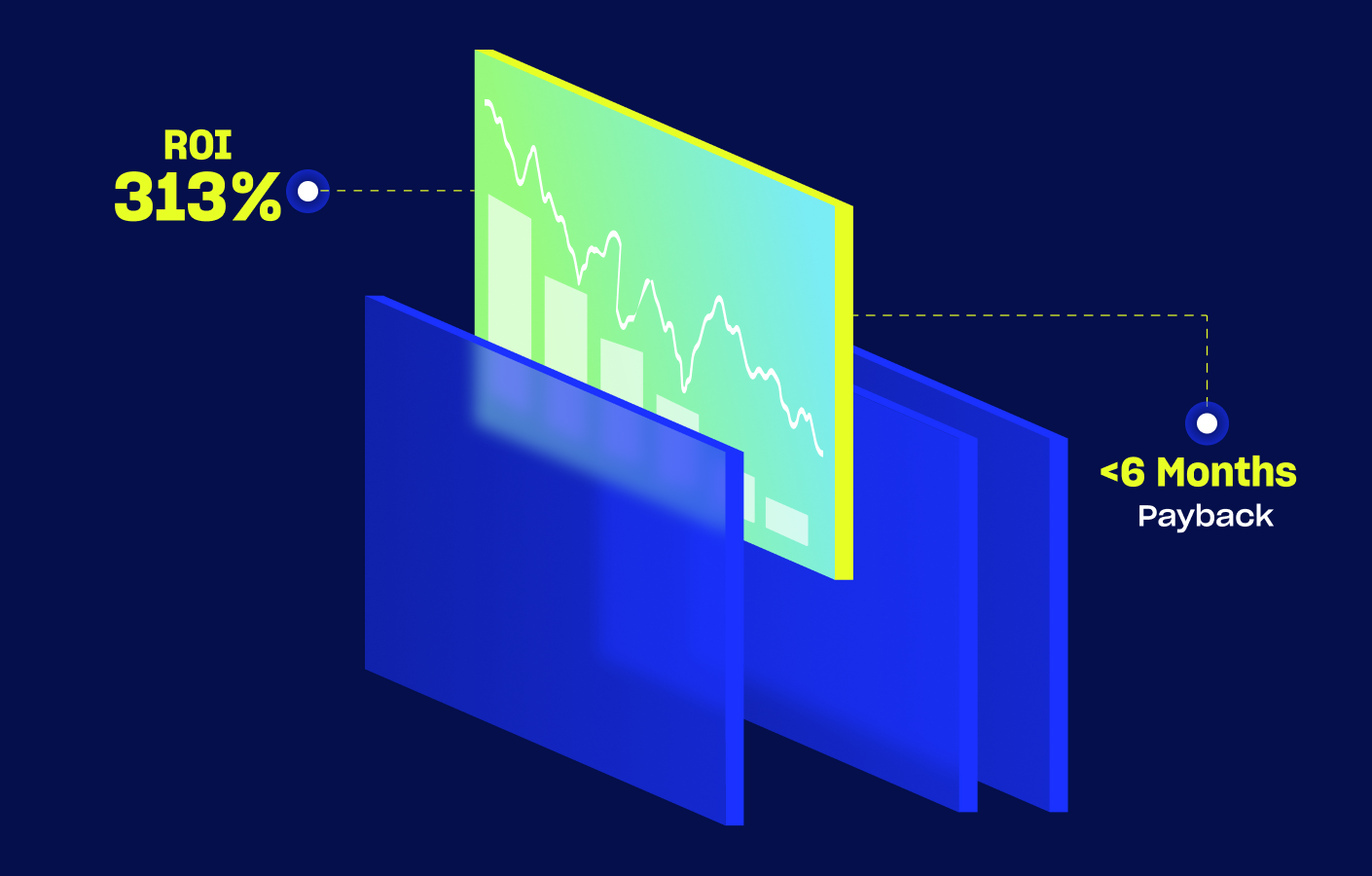

A Forrester Total Economic Impact™ study found that Edwin AI delivered a 313% ROI for a composite organization.

We’ll send you real talk, smart tips, and proven ways to make faster decisions and better calls right to your inbox.
