Anomaly Detection for DevOps

Get Better Observability With Machine Learning Anomaly Detection.

DevOps teams today are challenged by the rapid growth and increasing complexity of infrastructure. Static thresholds alone are no longer enough to manage those environments, so modern DevOps teams rely on advanced ML/AI algorithms to close the gap.
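To make the difference concrete, here is a minimal sketch that compares a fixed threshold with an expected range learned from recent data. It is an illustration only, not LogicMonitor's algorithm: the function names, the window of 5 samples, the multiplier `k=3.0`, and the sample CPU values are all assumptions chosen for the example.

```python
from statistics import mean, stdev

def static_alerts(values, threshold):
    """Return indices where a value exceeds a fixed threshold."""
    return [i for i, v in enumerate(values) if v > threshold]

def dynamic_alerts(values, window=5, k=3.0):
    """Return indices where a value falls outside mean +/- k * stdev
    of the preceding `window` samples (hypothetical rolling band)."""
    alerts = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu, sigma = mean(history), stdev(history)
        # Guard against a zero-width band when the history is constant.
        if abs(values[i] - mu) > k * max(sigma, 1e-9):
            alerts.append(i)
    return alerts

# Illustrative CPU utilization samples (percent); the spike is at index 7.
cpu = [20, 21, 19, 20, 22, 21, 20, 55, 21, 20]

static_result = static_alerts(cpu, threshold=90)   # spike stays under 90%
dynamic_result = dynamic_alerts(cpu)               # spike leaves the learned band
```

In this sample the jump to 55% never crosses a 90% static threshold, so it goes unnoticed, while the rolling band flags it because it is far outside what the recent history suggests.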

LogicMonitor’s Anomaly Detection solution is part of our AIOps Early Warning System, which provides context and meaningful alerts, illuminates patterns, and enables foresight and automation. All of this is done automatically, without exposing users to ML/AI algorithms and parameters.

In this article we will cover:

The first step toward artificial Neural Networks came in 1943 when Warren McCulloch and Walter Pitts wrote a paper on how neurons might work. They even modeled a simple neural network with electrical circuits.

Other algorithms used to describe changes in time series using a mathematical approach (e.g. the ARIMA model by George Box and Gwilym Jenkins) were developed in the 1970s.

Even so, it is only in recent years that two things have made efficient and accurate model training practical: enough computational power (GPUs started being used to train neural networks in 2009) and the availability of huge amounts of data.

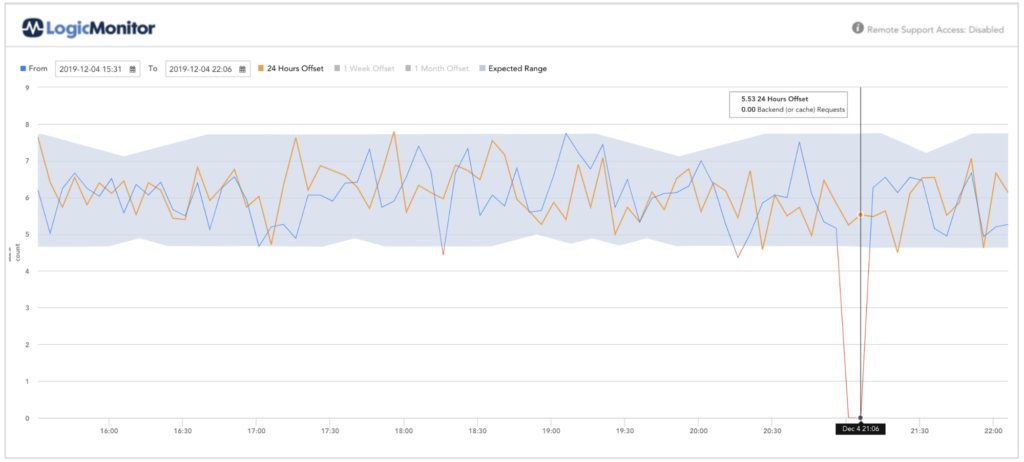

Comparing the original time series with a one-day offset (orange) and the expected range

Several anomaly detection techniques have been proposed in the literature. Popular ones include forests, tensor-based and correlation-based methods, neural networks, Bayesian networks, and deviations from association rules and frequent itemsets.

At LogicMonitor, our platform processes data as a stream, which keeps the system agile so it can adjust quickly and use the right algorithm. We believe that a DevOps engineer should not need to become a data scientist (we’ve hired a few). Our platform should do the hard work for you.
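As a rough illustration of what processing data as a stream can look like, the sketch below keeps a running mean and standard deviation that are updated one datapoint at a time, without storing the full history. It uses Welford's online algorithm, which we picked for the example; it is not a description of the algorithms LogicMonitor runs. The class name `StreamingStats` and the multiplier `k` are hypothetical.

```python
import math

class StreamingStats:
    """Running mean and sample standard deviation (Welford's online algorithm)."""

    def __init__(self):
        self.n = 0          # number of datapoints seen so far
        self.mean = 0.0     # running mean
        self._m2 = 0.0      # running sum of squared deviations from the mean

    def update(self, x):
        """Fold one new datapoint into the running statistics."""
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (x - self.mean)

    @property
    def stdev(self):
        """Sample standard deviation; 0.0 until at least two points are seen."""
        return math.sqrt(self._m2 / (self.n - 1)) if self.n > 1 else 0.0

    def is_anomaly(self, x, k=3.0):
        """True if x lies more than k standard deviations from the running mean."""
        if self.n < 2:
            return False  # not enough data to judge yet
        return abs(x - self.mean) > k * self.stdev

stats = StreamingStats()
for value in [2, 4, 4, 4, 5, 5, 7, 9]:
    stats.update(value)
```

Because each update touches only three numbers, memory use stays constant no matter how long the stream runs, which is one reason online methods like this suit monitoring data.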

Many competing monitoring platforms require DevOps teams to fill in certain parameters, but LogicMonitor takes away this burden and automatically answers the following questions:

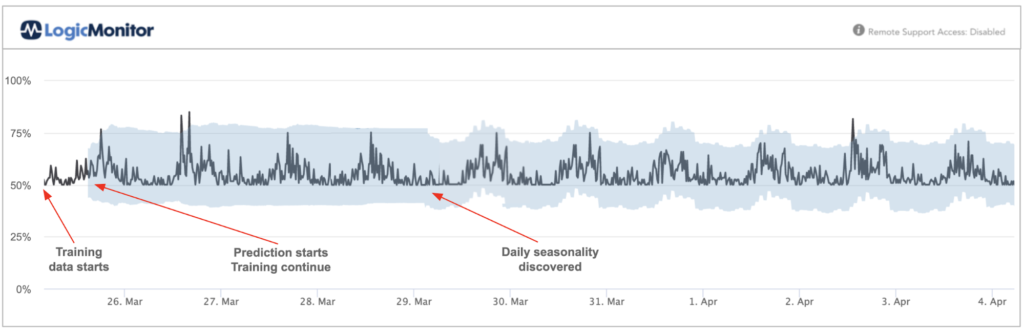

Workloads are classified automatically, and the model learns and adjusts with each new datapoint. Transformers are used to handle seasonalities, shifts, and similar effects. Once implemented, the model warm-up times are as follows:

Note: daily seasonality kicks in automatically after 2.5 days.
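To put the 2.5-day figure in practical terms, the sketch below works out how many samples that warm-up represents at a given polling interval, and checks whether a series has enough history for daily seasonality. Only the 2.5-day value comes from the note above; the function names and the polling intervals are assumptions made for the example.

```python
import math
from datetime import timedelta

# Warm-up before daily seasonality applies, taken from the note above.
DAILY_SEASONALITY_WARMUP = timedelta(days=2.5)

def daily_seasonality_enabled(history_span: timedelta) -> bool:
    """True once the collected history covers the warm-up period."""
    return history_span >= DAILY_SEASONALITY_WARMUP

def samples_needed(poll_interval: timedelta) -> int:
    """Number of samples collected during the warm-up at a given polling interval."""
    return math.ceil(DAILY_SEASONALITY_WARMUP / poll_interval)

# Hypothetical polling intervals, for illustration only.
five_minute_samples = samples_needed(timedelta(minutes=5))
one_minute_samples = samples_needed(timedelta(minutes=1))
```

At a five-minute polling interval, for instance, 2.5 days of history corresponds to 720 datapoints.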

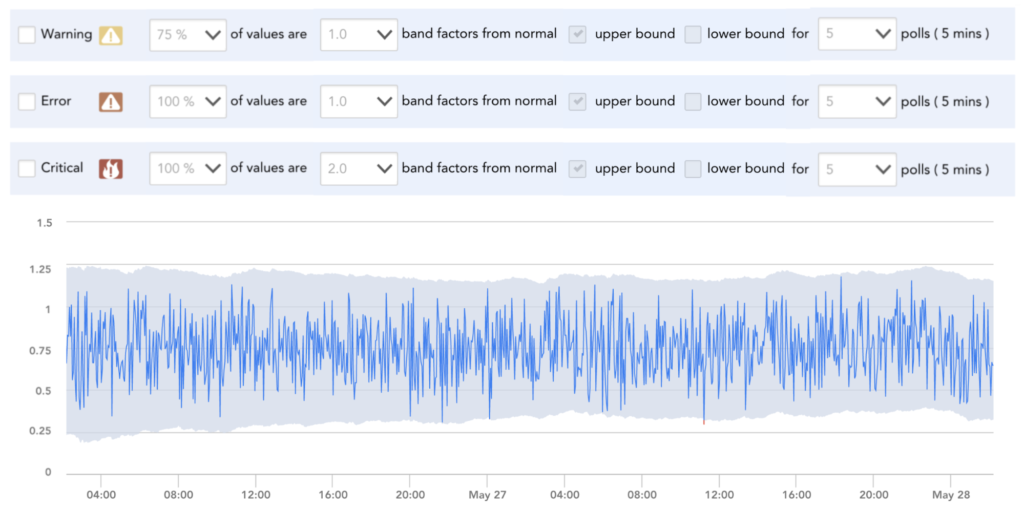

When setting up anomaly detection, users should not be exposed to ML/AI algorithm parameters. Options for adjusting algorithm sensitivity should be described in plain English.

In the rare cases where tuning is required, it is possible. Our philosophy, however, is that this burden should not fall on the user by default and should be avoided in 99.9% of scenarios.
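One way to picture a plain-English sensitivity setting is as a label that maps to a numeric band width behind the scenes. The sketch below is hypothetical: the labels, the multipliers, and the function name `expected_range` are assumptions for illustration, not LogicMonitor's actual settings.

```python
# Hypothetical mapping from a plain-English label to a band multiplier.
# Higher sensitivity means a narrower expected range and more alerts.
SENSITIVITY_TO_K = {"low": 4.0, "medium": 3.0, "high": 2.0}

def expected_range(mean, stdev, sensitivity="medium"):
    """Return (lower, upper) bounds of the expected range for a sensitivity label."""
    k = SENSITIVITY_TO_K[sensitivity]
    return (mean - k * stdev, mean + k * stdev)

high_band = expected_range(50.0, 5.0, "high")   # narrower band
low_band = expected_range(50.0, 5.0, "low")     # wider band
```

The user chooses a word, and the platform translates it into whatever numeric parameter the underlying model needs, so the parameter itself never has to appear in the interface.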

© LogicMonitor 2026 | All rights reserved. | All trademarks, trade names, service marks, and logos referenced herein belong to their respective companies.